China’s Alternative Order: And What America Should Learn From It

By now, Chinese President Xi Jinping’s ambition to remake the world is undeniable. He wants to dissolve Washington’s network of alliances and purge what he dismisses as “Western” values from international bodies. He wants to knock the U.S. dollar off its pedestal and eliminate Washington’s chokehold over critical technology.

The Five Futures Of Russia: And How America Can Prepare For Whatever Comes Next

Vladimir Putin happened to turn 71 last October 7, the day Hamas assaulted Israel. The Russian president took the rampage as a birthday present; it shifted the context around his aggression in Ukraine. Perhaps to show his appreciation, he had his Foreign Ministry invite high-ranking Hamas representatives to Moscow in late October, highlighting an alignment of interests.

Margaret E. Raymond On Proven Results: Highlighting The Benefits Of Charter Schools For Students And Families

What is MyHoover?

MyHoover delivers a personalized experience at Hoover.org. In a few easy steps, create an account and receive the most recent analysis from Hoover fellows tailored to your specific policy interests.

Great Powers Don’t Mind Their Own Business, They Shape The Future, Says Condoleezza Rice At 2024 Drell Lecture

Hoover Institution Director Condoleezza Rice gave a speech titled “What Does America Stand For?” Rice discussed the economic, political, and military systems championed by the US following World War II, how those systems are being challenged, and what America’s role in the world should be today.

Sustained Debt Reduction: The Jamaica Exception

Sharp, sustained reductions in public debt are exceptional, especially recently. We know this because public-debt-to-GDP ratios have been trending up in advanced countries, emerging markets, and developing countries alike.

Commentary on the News

The Second Cold War Is Escalating Faster Than The First

To understand what is at stake in the fight against the axis of China, Russia and Iran, just read “The Lord of the Rings.”

FEATURED PUBLICATIONS

Strategika

An online journal that analyzes ongoing issues of national security in light of conflicts of the past.

The Caravan

The Caravan is a quarterly publication on the contemporary dilemmas of the Greater Middle East.

California on Your Mind

Analysis, politics, and the economics of the Golden State

California Loses Nearly 10,000 Fast-Food Jobs After $20 Minimum Wage Signed Last Fall

More Videos & Podcasts

The Russian Opposition And Ukraine: A Conversation With Vladimir Milov

In this episode of Battlegrounds, H.R. McMaster and Vladimir Milov discuss the war in Ukraine, the status of the Russian opposition, and prospects for the restoration of peace, Wednesday, April 10, 2024.

The Rise Of The Machines: John Etchemendy And Fei-Fei Li On Our AI Future

John Etchemendy and Fei-Fei Li are the codirectors of Stanford’s Institute for Human-Centered Artificial Intelligence (HAI), founded in 2019 to “advance AI research, education, policy and practice to improve the human condition.” In this interview, they delve into the origins of the technology, its promise, and its potential threats. They also discuss what AI should be used for, where it should not be deployed, and why we as a society should—cautiously—embrace it.

America’s Immigration Puzzle, Iran Strikes (Out) – And 60 Is The New 40

Nearly 40 years since the nation last saw comprehensive reform on the matter, the consensus is that America’s immigration system is sorely in need of updating to 21st-century realities. Reihan Salam, Manhattan Institute president and author of the book Melting Pot or Civil War?, joins Hoover senior fellows Niall Ferguson, John Cochrane, and H.R. McMaster to discuss a smarter approach to welcoming newcomers to America.

Revisiting Freedom’s Cause: David Davenport And Checker Finn On Rejuvenating Civic Education

Better ways to engage K-12 and college students in the understanding and appreciation of the concept of “life, liberty, and the pursuit of happiness.”

Library & Archives

A World-Class Library & Archives

Founded by Herbert Hoover in 1919, the Hoover Institution Library & Archives is home to some of the world's most renowned collections documenting war, revolution, peace, and political, economic, and social change in the twentieth and twenty-first centuries.

Free and open to all, discover how to search the collections, arrange a research visit, or explore exhibitions by clicking below.

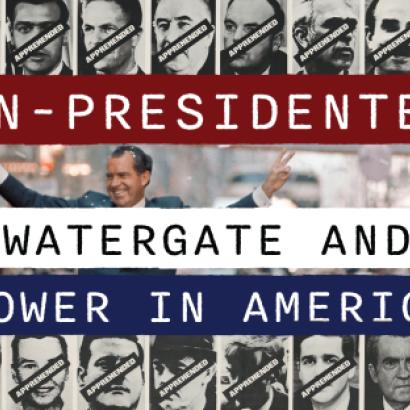

Exhibitions | Now On View

The exhibitions Un-Presidented: Watergate and Power in America (February 12–August 11, 2024) and Hoover@100: Ideas Defining A Century (ongoing) are open and free to all visitors to Hoover Tower, at the heart of the Stanford University campus.

Research Services

Planning an onsite visit to the reading room? Conducting your research from afar? Staff are ready to connect you with the most relevant materials through reference consultations, assisting with registration and material requests, digitization, and more.

The Collections

Acquiring, preserving, and making accessible collections of enduring value, including more than one million library volumes and over six thousand archival collections.

Digital First Initiative

Our aim is to make full archival collections accessible to researchers around the world through the digitization of textual, graphical, sound, and moving-image materials.

Engagement & Outreach

Building connections to our collections by sparking curiosity in audiences interested in the meaning and role of history through exhibitions, classes, tours, and special programming.