PARTICIPANTS

Yevgeniy Teryoshin, John Taylor, Annelise Anderson, Michael Bordo, Michael Boskin, Matthew Canzoneri, Pedro Carvalho, John Cochrane, Steven Davis, Andy Filardo, Paul Gregory, Laurie Hodrick, Robert Hodrick, Evan Koenig, Don Kosh, Jeff Lacker, Michael Melvin, Axel Merk, Alexander Mihailov, David Papell, Charles Plosser, Laust Saerkjaer, Pierre Siklos, Christine Strong, Jack Tatom, George Tavlas

ISSUES DISCUSSED

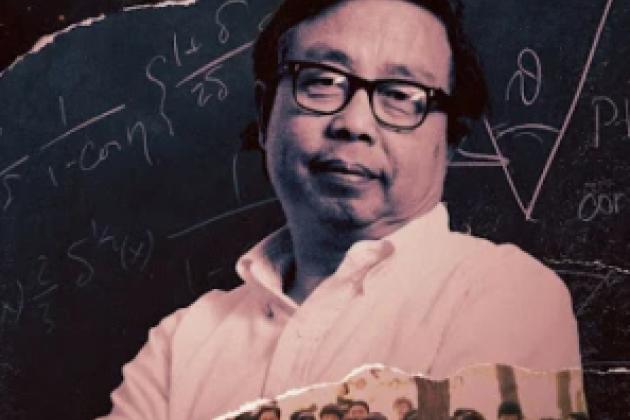

Yevgeniy Teryoshin, Assistant Professor of Economics at the University of Nevada, Las Vegas, discussed “Historical Performance of Rule-Like Monetary Policy.”

John Taylor, the Mary and Robert Raymond Professor of Economics at Stanford University and the George P. Shultz Senior Fellow in Economics at the Hoover Institution, was the moderator.

WATCH THE SEMINAR

Topic: The Historical Performance of Rule-Like Monetary Policy

Start Time: April 19, 2023, 12:15 PM PT

>> John Taylor: We're very happy to have Yevgeniy Teryoshin speak to us today, all the way from Las Vegas. We wish we were there; it seems like the weather is warmer, and we could do a little gambling, except we wouldn't. Yevgeniy got his PhD here in 2018, is what I have, and today you're going to talk about the historical performance of rule-like monetary policy.

We have a copy of your paper and the slides you're gonna use, so we have a good group anxious to hear what you have to say. This is the Hoover Institution, in case you're wondering, and you're welcome to be here. Go ahead.

>> Yevgeniy Teryoshin: All right, thank you, John, for inviting me to talk at this working group.

And also, I just wanna say that it's kind of hard to see everyone in the conference room picture so if you have any questions, please interrupt me. I might not be able to see kind of your physical movements to stop for the questions, but I'm more than happy to answer anything you may have.

And so to start off with, I just wanted to talk a little bit about where this paper comes from. The initial idea for this paper was from a paper that David Papell, who's also here today, presented at one of the Hoover monetary policy conferences that John's organized.

And in that paper, David and his co-authors showed that across the post-World War II period, periods where monetary policy was broadly consistent with Taylor rules tended to have better economic performance in the United States. And at the time, something that came up to me, and kinda the motivation for this paper, was, well, yes, that's definitely true from their results.

But it was unclear to me how much of that was kinda a broader trend versus how much of it was that monetary policy during the 1970s looked very much inconsistent with the Taylor rules, but also happened to be during the worst period of our economic performance since World War II, in terms of inflation.

And so the idea behind this paper initially was to take their broad approach and apply it to other countries, kinda trying to see, well, if this relationship is gonna hold across a bunch of countries, then it's probably something more robust. If it's only true for the United States, perhaps the 1970s period is kinda driving the results and we might not wanna extrapolate too much from it.

So that kind of got me thinking about this, and so let me start off. The first thing I wanna briefly talk about is, what exactly are deviations from policy rules? The point of this paper is to argue that, at least historically, periods where standard policy rule recommendations were very different from actual policy had bad outcomes.

But for that you have to kinda think about what exactly is a deviation from a policy rule. And in the slide, I have two different policy rule recommendations. This is from a Ben Bernanke post at the Brookings Institution in which he was arguing that monetary policy in the early 2000s was actually rules-based, kind of in contrast to what John usually was talking about at that time, suggesting that monetary policy was too loose prior to the financial crisis.

And so on the left-hand side, in dotted black, I have the policy rule recommendation with the original Taylor rule, using the GDP deflator as the measure of inflation. And we can kinda see here that in this period of the early 2000s, the Taylor rule in that specification suggested that monetary policy should have been tighter during that time period, and there were substantial deviations.

Then what Bernanke argues in his post is that if we make a few changes, if we allow for a modified Taylor rule that cares more about output stability and we switch the GDP deflator to a core PCE measure of inflation, suddenly the early 2000s look a lot more rule-like, or at least consistent with a Taylor rule.

And so, before I get into my results, I wanted to emphasize that in general, the specifics of what assumptions you make, both in terms of the rules but also in the underlying measures of inflation and output, really matter for the specific policy recommendations. But then what I'm gonna spend the rest of my talk arguing is that it doesn't really matter at the end of the day.

At the end of the day, for the big-picture results, I wanna show they're robust to a variety of specifications, and so let me get into that. So first, I deviate from a lot of the literature in talking about this as rule-like rather than rule-based. And I just wanted to briefly state what exactly I mean by rule-like monetary policy.

So when I talk about rule-like monetary policy, conceptually, I'm thinking about policy that is broadly consistent with a variant of a standard policy rule, either the original or the modified Taylor rule, and is so for a protracted period of time. That's kinda conceptually what I'm getting at. Obviously, none of these central banks are actually following policy rules like these.

So I don't wanna argue that this is rules-based policy; it's just broadly consistent with a rule. And so that's why I'm going with rule-like. In terms of quantifying this, I'm gonna be thinking about this as the absolute deviation between a policy rule recommendation and the actual interest rate at a given point in time, and this is kinda just to get a firm ratio.

But generally speaking, I'm thinking that if the policy rule recommendation is within 200 basis points of the actual interest rate, that's broadly rule-like. More than 200 basis points, that's gonna be broadly discretionary. That kinda comes out from the results, and I'll talk about that later on.

And of course, all of these are gonna be relative to a policy rule and the chosen measures of inflation and output. And I'm gonna try to argue that it's robust to that. Just to preview the results, kinda, my results are gonna be in two parts. One is just a relationship without any clear evidence for causality.

I wanna first kinda argue that overall, periods of rule-like monetary policy across the countries that I look at are associated with greater economic stability. And this is robust across a variety of things, whether you use original or modified Taylor rules, whether you use different choices of output gaps. And furthermore, when I look at more countries than the United States, I find that most of these results are gonna be driven by inflation stability.

Periods of policy that are consistent with Taylor rules have much better inflation stability outcomes, but that doesn't necessarily carry over to output stability. Those results are gonna be much more country-specific. And then the other point that I would like to make in this paper, which is a much harder thing to prove or argue, is that there's actually a causal relationship here.

Because in principle, you could imagine that having more rule like policy causes better economic conditions. But it's also possible that in periods of really bad economic conditions, central banks just set policy that is less consistent with the policy rules. And so in terms of policy implications, it's important to have not just a relationship, but a causal one.

And to do this, and I'll admit that I do not have a perfect argument for this, this sort of literature does not have a great way to truly show that. But what I'm going to be using to suggest causality is, one, tests of Granger causality, which are gonna end up running in both directions.

And two, I'm gonna be thinking about the timing of the structural breaks in my analysis. Let's say that a rule-like period starts after monetary policy has changed; that probably suggests that that's an actual response to the monetary policy regime.

If there's no change in monetary policy regime and there's a switch because the financial crisis starts, that potentially suggests that these results are being driven by reverse causality. And so, I'm gonna have some evidence. It's not gonna be perfect, and I'll get to it once I get into the results.

So, I'm not gonna talk a lot about the literature beyond saying that a lot of this is working off of David's work. The other thing that is important to note is that there's a large literature by Orphanides and co-authors that says that this sort of analysis has to be done using real-time data, and that's what's limiting my choice of countries.

So, I'm using countries for which I actually have a decent amount of data in real time. Otherwise, I could have a much bigger sample, but that would end up with policy rule recommendations that are nonsensical, because I would be using data that the central banks at the time did not have, so it would be a very unfair comparison.

And so, the countries I use are all gonna be countries that have real-time data for at least 20 years or so. To get into the details of the methodology: I'm gonna be using two policy rules. My main specification is gonna be with the original Taylor rule, so this implies a 2% real interest rate, a 2% inflation target, and a larger coefficient on inflation than on output.

I also use, as an alternative, the modified Taylor rule that Yellen has suggested in the past is a more appropriate description of the monetary policy that the central bank in the United States, at least, does, and more consistent with the dual mandate of equally weighting inflation and output stability.
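As a rough illustration of the two rules described here, the following is a minimal Python sketch. The talk states only the 2% real rate and 2% inflation target; the numeric coefficients (0.5 on the inflation gap, and 0.5 versus 1.0 on the output gap) are assumptions taken from the commonly cited forms of the original and modified rules, and the function name and sample inputs are hypothetical.

```python
def taylor_rate(inflation, output_gap, gap_coef=0.5,
                real_rate=2.0, target=2.0, infl_coef=0.5):
    """Policy rate prescription, in percent.

    i = r* + inflation + infl_coef * (inflation - target) + gap_coef * output_gap
    Coefficient values are assumptions, not taken from the talk.
    """
    return (real_rate + inflation
            + infl_coef * (inflation - target)
            + gap_coef * output_gap)


# Hypothetical inputs: 3% inflation, output 1% below trend.
original = taylor_rate(3.0, -1.0)                # original rule, gap_coef = 0.5
modified = taylor_rate(3.0, -1.0, gap_coef=1.0)  # modified rule, assumed gap_coef = 1.0
```

With the same inputs, the modified rule prescribes a lower rate whenever the output gap is negative, because it puts more weight on the gap.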

The results are gonna be robust across these. In this talk today, I'm gonna focus on the original one, but if you look at the paper, the results are all gonna be there for the modified one as well, and they're, broadly speaking, robust. But to implement this and have policy recommendations, I need, one, a measure of inflation in real time and, two, a measure of output gaps.

And so, for inflation, I'm gonna be just using CPI measures of inflation. I need a measure that I actually have for all the countries I'm using, and core PCE is not something I have for this entire time period for all the countries. CPI is, so that's what I'm gonna be using; it's kinda a data limitation issue.

For output, obviously, there's a lot of different measures of output gaps. Unfortunately, if I wanna use real-time data, that limits me to primarily making my own output gaps. I cannot use, say, CBO output gaps, cuz there's just not good data for CBO gaps in real time across this entire time period, much less for other countries.

Output gaps are gonna be generated by thinking about what data was available at that point in time and detrending that data with some univariate filter; I'll talk about the filtering in a bit. And so, for example, if I wanna have the output gap for 2000 quarter one, I would look at the available data at the time, which would be from 1946 through 1999 quarter four.

I'd detrend that entire time series using some method, and then use the most recent output gap from that detrending as the best measure of the output gap at that point in time.
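The real-time procedure described here (detrend one data vintage, keep only the last gap) can be sketched as follows. This is an illustration under stated assumptions, not the paper's code: it assumes the input is log real GDP for a single vintage, uses a Hodrick-Prescott filter as the univariate filter, and solves the filter's linear system with a plain dense solver for brevity. The function names are hypothetical.

```python
def hp_trend(y, lam):
    """Hodrick-Prescott trend: solve (I + lam * D'D) tau = y,
    where D takes second differences. Needs len(y) >= 3."""
    n = len(y)
    a = [[0.0] * n for _ in range(n)]
    for i in range(n):
        a[i][i] = 1.0
    for k in range(n - 2):
        row = {k: 1.0, k + 1: -2.0, k + 2: 1.0}  # one row of D
        for i, di in row.items():
            for j, dj in row.items():
                a[i][j] += lam * di * dj
    b = [float(v) for v in y]
    # Gaussian elimination with partial pivoting (dense, fine for short series).
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[piv] = a[piv], a[col]
        b[col], b[piv] = b[piv], b[col]
        for r in range(col + 1, n):
            f = a[r][col] / a[col][col]
            if f != 0.0:
                for c in range(col, n):
                    a[r][c] -= f * a[col][c]
                b[r] -= f * b[col]
    tau = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = b[r] - sum(a[r][c] * tau[c] for c in range(r + 1, n))
        tau[r] = s / a[r][r]
    return tau


def real_time_gap(vintage_log_gdp, lam=50_000):
    """Percent output gap for the last quarter of one data vintage."""
    trend = hp_trend(vintage_log_gdp, lam)
    return 100.0 * (vintage_log_gdp[-1] - trend[-1])
```

Applied to a list of vintages, one per quarter, `[real_time_gap(v) for v in vintages]` would give the real-time gap series; each vintage contains only the data available at that date.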

>> John Cochrane: Can you just tell us a little how you think about employment and unemployment in place of output gaps in this kind of rule, I mean, the Fed's mandate is employment, and unemployment rates have the advantage that you don't have to detrend them.

They directly tell you something about the level of the economy, which is what belongs there.

>> Yevgeniy Teryoshin: So, I guess one answer to that would be that in order to use them fully, you would need to have the natural rate of unemployment, which would also be changing over time for various countries, and that makes it a little bit harder to implement.

It's definitely doable. I know David has switched to using that in his more recent papers; he uses the unemployment rate or the cyclical unemployment rate as the measure, and I am not averse to that. Initially, I was not thinking about it that way. I do admit there's the potential for it being a better measure for the reasons you said.

But implementing it across many countries requires me to have a good measure of the natural rate of unemployment in real time, which is problematic when I'm looking at many countries.

>> John Cochrane: Since Taylor is with us, what are your thoughts on employment or unemployment versus output gaps in that part of the rule?

>> John Taylor: Well, there's quite a close relationship between the two and sometimes the relationship requires estimates of the so-called natural unemployment rate, which can vary over time. But I don't think it will make a lot of difference, I personally thought, okay.

>> Yevgeniy Teryoshin: And for the United States, kinda in David's work, there does not seem to be a lot of difference for that as well.

So, in terms of actually detrending this, I'm gonna be thinking about univariate methods, given my data limitations when I'm thinking about lots of countries, and the particular method is gonna result in quite different output gaps. David Papell and some of his co-authors, including Alex Nikolsko-Rzhevskyy, have in the past argued that of the univariate filters, the quadratic one seems to do best.

But when I looked at that, I found that the quadratic filter does well on some dimensions, but not necessarily as well on others. Conceptually, the output gaps I wanna use would be the output gaps the Federal Reserve or the respective central bank actually had at that point in time.

For the United States, we have them from the Green Book estimates for a period of time, but not for the full time period, and for certain other countries we do not have them at all. And so, what I do is compare how various HP filters and the quadratic filter do, in terms of how the real-time output gaps from these filters compare with the output gaps from the Federal Reserve's Green Book estimates.

And in general, what I find is, first, the standard HP filter does horribly. In particular, this 1,600 line right here shows that for a standard HP filter for quarterly data, with lambda equal to 1,600, the correlation in output gaps is only 0.3, and the average deviation between the output gap from the filter and the Green Book estimates is about 1.5%.

Quadratic, which is what some of the other literature argued for, has a much better correlation between the output gaps, but also a substantially larger deviation on average: about 2.3% output gap differences between the Green Book estimates and the quadratic filter. And as you increase this parameter lambda in the HP filter, I end up with filters anywhere in the range of about 25,000 to 55,000 for that lambda.

Those have better correlations with the Green Book estimates and also smaller deviations in terms of how far away the actual measure is from the Federal Reserve Green Book estimates. And I end up working with 50,000 as my main specification. I could reasonably say anything between 25,000 and 55,000 would be reasonable given what I have.
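The two comparison statistics mentioned here can be sketched in a few lines of Python. This assumes the "average deviation" is a mean absolute difference between the filter gap and the Green Book gap, which the talk does not spell out; the function names and any inputs are hypothetical.

```python
from math import sqrt


def correlation(xs, ys):
    """Pearson correlation between two equally long gap series."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(vx * vy)


def mean_abs_gap_difference(xs, ys):
    """Average absolute difference, in percentage points of output gap.
    Treating the 'average deviation' as a mean absolute difference is an assumption."""
    return sum(abs(x - y) for x, y in zip(xs, ys)) / len(xs)
```

Running both functions for each candidate lambda against the same Green Book series would reproduce the kind of comparison described: one would look for a high correlation together with a small average difference.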

And I also, for robustness, think about the standard HP filter, just to make sure that my results are robust to that. And for the United States, I also look at quadratic. None of these things really matter in the big picture; at the end of the day, the results are similar.

I could also use the CBO output gaps for the United States; again, not a lot of difference in the final results. So with that done, with the policy rule recommendations in real time constructed, I can then build the absolute deviations of the policy rule recommendations from the observed central bank rate.

A lot of these time periods are gonna have central bank rates at the zero lower bound or the effective zero lower bound. And so for that, I use shadow rates for the respective time periods to try to proxy for the true stance of monetary policy at the effective zero lower bound.

And then this gives me a measure of deviation from the policy rule at a given point in time. But rule-like policy you typically think about as policy that's consistent with the policy rule not just for one quarter, but for a long period of time. And I have two ways to try to capture this.

The first mirrors David Papell and co-authors' approach of structural breaks, so let me talk about that one first. For that approach, I take this series of absolute deviations from the policy rule recommendations, and I run the Bai and Perron tests for structural breaks to estimate when there are structural breaks and the number of them, letting all of that come from the data.

So I'm not saying this period is rule-like, this one is discretionary. I'm letting the data tell me, well, in these periods the average deviation was large and there was a structural break there; these periods had lower deviations. The place where I do have to make some qualitative judgments is gonna be in terms of actually classifying these eras.
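To make the break-dating idea concrete, here is a simplified Python sketch. It is not the full Bai and Perron procedure: it only finds the break dates that minimize the sum of squared deviations around segment means for a given number of breaks, using dynamic programming, and it does not perform the tests that choose the number of breaks. The function names, the `min_len` trimming parameter, and the sample series are hypothetical.

```python
def estimate_breaks(series, n_breaks, min_len):
    """Break dates minimizing total squared error around segment means.

    Returns the start index of every segment after the first.
    Requires len(series) >= (n_breaks + 1) * min_len.
    """
    n = len(series)
    s1, s2 = [0.0], [0.0]  # prefix sums of values and squared values
    for v in series:
        s1.append(s1[-1] + v)
        s2.append(s2[-1] + v * v)

    def ssr(i, j):
        # Sum of squared residuals of series[i:j] around its own mean.
        total = s1[j] - s1[i]
        return (s2[j] - s2[i]) - total * total / (j - i)

    inf = float("inf")
    # cost[k][j]: best fit of series[:j] using k breaks (k + 1 segments).
    cost = [[inf] * (n + 1) for _ in range(n_breaks + 1)]
    back = [[0] * (n + 1) for _ in range(n_breaks + 1)]
    for j in range(min_len, n + 1):
        cost[0][j] = ssr(0, j)
    for k in range(1, n_breaks + 1):
        for j in range((k + 1) * min_len, n + 1):
            for i in range(k * min_len, j - min_len + 1):
                c = cost[k - 1][i] + ssr(i, j)
                if c < cost[k][j]:
                    cost[k][j] = c
                    back[k][j] = i
    breaks, j = [], n
    for k in range(n_breaks, 0, -1):
        j = back[k][j]
        breaks.append(j)
    return sorted(breaks)


def segment_means(series, breaks):
    """Average deviation within each era implied by the break dates."""
    edges = [0] + list(breaks) + [len(series)]
    return [sum(series[a:b]) / (b - a) for a, b in zip(edges, edges[1:])]
```

Given a quarterly series of absolute deviations, `segment_means(devs, estimate_breaks(devs, 4, 20))` would return one average deviation per era, which is the quantity the classification step works with.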

So I can say, well, for the United States, there's these four structural breaks, five periods, but then I need to say which ones of these I'm gonna call rule-like versus which ones I'm gonna call more discretionary. The general approach I take for this is that the highest-deviation period for any country is gonna be discretionary.

The lowest period is rule-like, and the other ones are gonna be qualitatively classified. But I'm gonna have a lot of intermediate eras, which I'm going to classify both ways in the test results. I'm gonna classify them as both rule-like and discretionary, to show that it doesn't matter whether we think about these intermediate eras as rule-like or discretionary.

The results are gonna be robust to either specification.
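The classification logic can be sketched as below. This is a mechanical approximation of a step the speaker describes as partly qualitative: the highest-deviation era is labeled discretionary, the lowest rule-like, anything else under the 200 basis point guide mentioned earlier is labeled rule-like, and the rest is left as intermediate for judgment. The function names and the sample numbers are illustrative assumptions.

```python
def classify_eras(mean_devs, threshold=2.0):
    """Label each era from its mean absolute deviation (percentage points)."""
    lowest, highest = min(mean_devs), max(mean_devs)
    labels = []
    for d in mean_devs:
        if d == highest:
            labels.append("discretionary")
        elif d == lowest or d < threshold:
            labels.append("rule-like")
        else:
            labels.append("intermediate")  # left for qualitative judgment
    return labels


def relabel_intermediate(labels, as_label):
    """Robustness check: treat every intermediate era as `as_label`."""
    return [as_label if lab == "intermediate" else lab for lab in labels]


# Illustrative era averages, in percentage points.
labels = classify_eras([1.5, 4.5, 0.95, 2.02, 4.25])
```

Comparing losses under `relabel_intermediate(labels, "rule-like")` and `relabel_intermediate(labels, "discretionary")` mirrors the idea of showing results both ways.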

>> John Taylor: This suggests there's correlation, highest deviation, right, so it's not just one quarter of high deviation or one quarter of low deviation, it's continuous.

>> Yevgeniy Teryoshin: So in this approach, it's saying that there's gonna be a 5, 10, 15 year period over which, on average, deviations were fairly low across that entire time period.

You might have individual periods when deviations are gonna be fairly high within that 5, 10 year period, but on average, deviations are either high or low, and then I'm classifying based on that average deviation. And hopefully when I go to the results, I'll convince you that the qualitative aspects of my classifications are fairly reasonable, but that'll be for you to judge once we get there.

In terms of evaluating economic performance, I use a bunch of loss functions. I'm not gonna go through all of these right now. The important thing I would just say is that the first two are more linear loss functions that value inflation and output stability. And for this, I'm gonna use ex post data, since that's our best measure of what actually happened, rather than real-time data.

And another thing I would just wanna emphasize is these last two loss functions. So I have a loss function that cares only about inflation stability and a loss function that only cares about output stability. And so that's going to let me think about whether rule-like periods in general are associated with more output stability or inflation stability, or perhaps both.

I'm gonna be talking a lot about the fifth quadratic loss function later on, so I also just wanna emphasize that this quadratic loss function only cares about output stability; it does not care about inflation stability at all. And then for the structural break analysis, the main comparison is gonna be: what's the ratio of losses in discretionary eras to rule-like eras across all of these specifications?

And so one statement I will be making once I get to the results is that discretionary eras have twice the losses of rule-like eras. That would suggest that overall, for that particular specification, economic performance was about twice as bad during periods when policy was less consistent with the policy rule.
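A loss comparison of this kind can be sketched as follows. The talk does not give the functional forms, so the quadratic form below (mean squared inflation deviation from a 2% target plus mean squared output gap, with weights) is an assumption, as are the function names. Setting one weight to zero gives an inflation-only or output-only loss.

```python
def quadratic_loss(inflation, output_gap, target=2.0, w_infl=1.0, w_gap=1.0):
    """Average quadratic loss over an era (assumed form, not from the talk)."""
    n = len(inflation)
    infl_part = sum((p - target) ** 2 for p in inflation) / n
    gap_part = sum(g ** 2 for g in output_gap) / n
    return w_infl * infl_part + w_gap * gap_part


def loss_ratio(discretionary_loss, rule_like_loss):
    """Ratio above 1 means discretionary eras had larger losses."""
    return discretionary_loss / rule_like_loss
```

A ratio of 2 from `loss_ratio` corresponds to the "twice the losses" statement in the text.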

So that's the structural break approach. The other approach is fairly straightforward. It allows me to use a little bit more of the data and also allows me to do Granger causality tests, so that's why I do it. But the idea is quite similar, in the sense that I'm still gonna be looking at deviations from the policy rules in absolute value, but I'm gonna use all the data by running regressions.

And I use three measures of deviations from the policy rule for this. One is just the single-quarter deviation; I don't necessarily take a lot of stock in that, but I can have it as one specification. And the two that I think are more relevant are the moving averages, either a three-year moving average or a weighted moving average with a 0.1 smoothing factor.

Both of those are just saying that over the past three, four years, monetary policy on average had high or low deviations. And then I can estimate that same relationship I'm looking at in the structural break analysis by running a simple regression, getting me the correlation between these two things.
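The two smoothed deviation measures can be sketched in Python as below. This assumes quarterly data, so that three years is 12 quarters, and it interprets the weighted moving average as an exponentially weighted one with smoothing factor 0.1; both the interpretation and the function names are assumptions.

```python
def trailing_mean(devs, window=12):
    """Average absolute deviation over the last `window` quarters."""
    return [sum(devs[t - window + 1:t + 1]) / window
            for t in range(window - 1, len(devs))]


def exp_weighted_mean(devs, alpha=0.1):
    """Exponentially weighted average: new = alpha * latest + (1 - alpha) * old."""
    out = [devs[0]]
    for d in devs[1:]:
        out.append(alpha * d + (1 - alpha) * out[-1])
    return out
```

With a smoothing factor of 0.1, most of the weight falls on roughly the last few years of quarterly observations, which is in line with the "three, four years" description.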

And Evan had a question, so I want to give way to Evan.

>> Evan Koenig: Yeah, this is kind of related to John's earlier question. In your discussion here, you keep referring to deviations as absolute deviations. And I was just wondering to what extent you had looked at whether it matters whether you have consistent bias in one direction or another or, alternatively, white noise deviations from the policy rule prescriptions.

In other words, the consistency of the deviations in terms of direction. Is that something you've paid attention to?

>> Yevgeniy Teryoshin: So I have not paid attention to that, particularly in this paper. I do think kind of, it's an interesting question of is there some sort of non-symmetry in these effects?

And this does play a role in the results in some sense, in that, for example, I'm gonna end up saying that the entire period from around 1975 to 1985 is a single discretionary era. But in practice, the absolute deviations are always positive and large.

Up until around 1980, when Paul Volcker takes over, the policy rule recommendation would consistently have been higher than actual policy. And then after Paul Volcker takes over, the policy rule recommendation is lower. And so I classified that entire period as discretionary. However, those are conceptually quite different discretionary periods.

One is too loose, one is too tight relative to the policy rule, and it is interesting to think about that. I have not done so in this analysis, and so it's something potentially to look at, although given it took me about eight years to publish this paper, I'm not sure how much time I wanna spend on that.

>> Evan Koenig: You could imagine a Fed that was just sort of sloppy in implementing the Taylor rule versus a Fed that was just systematically misreading the economy or ignoring the recommendations of the Taylor rule, and you might get different consequences.

>> John Cochrane: All right, thank you.

>> Yevgeniy Teryoshin: I would just say that there's definitely periods where there's consistent bias upwards or downwards.

The periods that are gonna be discretionary are not gonna be periods where we're consistently jumping from large positive deviations to large negative deviations. That kinda white noise deviation is generally gonna end up being in the rule-like period. You just don't have that much jumping all the way between the extremes of up and down during the periods that I classify as discretionary.

>> John Cochrane: Could I ask, I mean, another thing that's gone on in this literature is the question of following the wrong rule. In Clarida, Gali, and Gertler, their analysis was not that policy was discretionary; it was just that the coefficient on inflation was too low. Do you think about that, or do you just consider that as one way of being discretionary and bad?

>> Yevgeniy Teryoshin: So all of this is gonna be relative to a particular rule. And if you're thinking about different rules, in the analysis it's gonna look discretionary in terms of these policy rules. At the end of the day, what I'm saying is this is not discretionary in a technical sense.

I'm saying that these are periods that are consistent with the two policy rules I'm considering versus periods that are inconsistent with these two policy rules. And them not being consistent could mean, well, they could be following a different policy rule, they could be doing whatever. I don't really take a stand on that.

I would say that I played around with expanding the set of policy rules for a smaller sample, and it doesn't generally change the results too much. But at the end of the day, I kinda have to take a stand on what is rule-like to some degree. The other thing I do is look at Granger causality, where I'm selecting the lag length with the Schwarz information criterion, to see whether I have any evidence for causality.

Obviously, Granger causality is not gonna be a perfect measure, but it provides us some guidance, and so I'll be talking a little bit about those results. Having said all that, let me jump into the results, since I've already used more than half my time. I'm gonna spend a little bit more time talking about the United States to try to give you a good feel for what I'm doing.
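A bivariate Granger causality check with lag selection by the Schwarz (Bayesian) information criterion can be sketched in plain Python as below. This is a textbook-style illustration, not the paper's implementation: it uses ordinary least squares via the normal equations, a single F statistic, and a common estimation sample when comparing lag lengths. All function names are hypothetical, and `demo_series` generates made-up data in which `x` leads `y`.

```python
import math
import random


def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    a, b = [row[:] for row in a], b[:]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[piv] = a[piv], a[col]
        b[col], b[piv] = b[piv], b[col]
        for r in range(col + 1, n):
            f = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= f * a[col][c]
            b[r] -= f * b[col]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (b[r] - sum(a[r][c] * x[c] for c in range(r + 1, n))) / a[r][r]
    return x


def ols_ssr(rows, y):
    """Sum of squared residuals from regressing y on the regressors in rows."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * v for r, v in zip(rows, y)) for i in range(k)]
    beta = solve(xtx, xty)
    return sum((v - sum(bi * ri for bi, ri in zip(beta, r))) ** 2
               for r, v in zip(rows, y))


def design(cause, effect, lags, start, with_cause):
    """Rows of [1, own lags, (cause lags)] for t = start .. end of sample."""
    rows = []
    for t in range(start, len(effect)):
        row = [1.0] + [effect[t - k] for k in range(1, lags + 1)]
        if with_cause:
            row += [cause[t - k] for k in range(1, lags + 1)]
        rows.append(row)
    return rows


def granger_f(cause, effect, lags):
    """F statistic for the null that lags of `cause` do not help predict `effect`."""
    y = effect[lags:]
    ssr_r = ols_ssr(design(cause, effect, lags, lags, False), y)
    ssr_u = ols_ssr(design(cause, effect, lags, lags, True), y)
    dof = len(y) - (2 * lags + 1)
    return ((ssr_r - ssr_u) / lags) / (ssr_u / dof)


def bic_lag(cause, effect, max_lag):
    """Lag length minimizing the Schwarz criterion on a common sample."""
    y = effect[max_lag:]
    n = len(y)
    scores = {}
    for p in range(1, max_lag + 1):
        ssr = ols_ssr(design(cause, effect, p, max_lag, True), y)
        scores[p] = n * math.log(ssr / n) + (2 * p + 1) * math.log(n)
    return min(scores, key=scores.get)


def demo_series(n, seed=0):
    """Hypothetical data in which x leads y by one period."""
    rng = random.Random(seed)
    x = [rng.gauss(0, 1) for _ in range(n)]
    y = [0.0] + [0.8 * x[t - 1] + 0.1 * rng.gauss(0, 1) for t in range(1, n)]
    return x, y
```

Running the test in both directions, for example `granger_f(devs, losses, p)` and `granger_f(losses, devs, p)` with `p = bic_lag(...)`, corresponds to the two-way check described in the talk; the F statistic would then be compared against the appropriate critical value.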

And then I'll go through the other countries much faster, since I just don't have the time. I could spend 2 hours if I wanted to talk about all of the results. So this is the result of the structural break analysis for the United States with the original Taylor rule and with my preferred specification, where we're using the HP filter with lambda equal to 50,000.

And so we have a structural break around 1974, a structural break around 1984, a structural break in 2000, and a structural break in 2008. And then these numbers up top are gonna be the average deviations.

In the late 1960s and early 1970s, the average deviation from the policy rule recommendation was about 1.5. During 1975 to 1985, it is 4.5. The Great Moderation period has an average deviation of about 0.95. Then the early 2000s have an average deviation of about 2, and since the financial crisis, an average deviation of about 4.

So next, to illustrate the methodology in practice, the first thing I would say is that this period with the highest deviation, the 1970s to 1985, that's clearly discretionary. This period of the Great Moderation, with the lowest deviation, that's gonna be the rule-like period for sure.

Classifying the other three periods requires some qualitative decisions. The final period, because it has a deviation of 4.5, sorry, 4.25, is very discretionary, quite similar to the other discretionary period, so I classify that as discretionary. The early 1970s period I classify as rule-like.

Generally, for anything with a deviation of less than 200 basis points, I'm just gonna go with the idea that that's gonna be rule-like, as a broad threshold. It's also much closer to the rule-like period than to the discretionary period. And then for the early 2000s, with an average deviation of 202 basis points, it's harder for me to say whether that's really rule-like or really discretionary.

It's kinda in between in terms of the absolute deviation, and it's also above what I would feel comfortable saying is really rule-like for this period. So I classify this as an intermediate period and then look at the results of the loss comparison where this period is thought of as rule-like, as discretionary, or just dropped entirely, making the comparison each way.

>> John Cochrane: Could I? I'm a little troubled by this, of course, cuz we would all like 1980 to be the breakpoint. But by classifying based on absolute deviations, rather than something like percentage or R-squared or so forth, you kinda automatically take periods that are volatile for other reasons and turn them into discretionary.

I mean, a lot of what happened in the Great Moderation period is just that nothing happened. If there's no shocks and nothing to react to and no inflation, no interest rate movement, the Fed looks gorgeous, cuz you can't deviate from something that isn't moving around.

Whereas when there's a lot of volatility, it's gonna be very hard to look rule-based by this measure. So I would think of something like deviation compared to how much volatility and inflation and unemployment there is in the first place.

>> Yevgeniy Teryoshin: I guess my first answer would be that I do agree with you, that would probably have been a better approach.

And as I've been thinking more recently on it, percent deviation or something like that would probably be a better measure. At the time, that was not something that I thought about when I did all the analysis. I do agree that there is definitely a potential for reverse causality for that exact reason; that is definitely true.

And at the end of the day, the question of whether I'm providing something interesting here without accounting for that, that's a separate question. But I would agree that redoing the entire analysis the way you're suggesting would probably have been, in hindsight, a better way to do this, and it's something that potentially would be worth doing.

>> Yevgeniy Teryoshin: So in terms of, let me actually skip the slide for a sec and just go to the results for a moment and then come back to that. And so, if you believe my classifications of the eras, this table shows us the losses in rules-based eras according to each of the loss functions, in the two discretionary eras, and in the single intermediate era.

And what we see overall is this. So these are the losses for each of the three types of eras, and then the other two columns, columns four and five, classify the intermediate era either as rule-like or as discretionary. And so I'm not gonna take a stand on whether the early 2000s were rule-like or discretionary, I'm gonna allow for either version.

And then in the last three columns, I have the ratio of losses in discretionary to rule-like periods: ignoring the intermediate era in the first column, considering the intermediate era as rule-like in the second column, and then considering it as discretionary in the third column. And the main takeaway of this table is that regardless of the particular way I classify the intermediate era, regardless of the loss function, the rule-like periods look better, with the caveat of what John Cochrane just said about how we end up with the specification in the first place.

And this is gonna be robust to other specifications of the Taylor rule and the modified Taylor rule, and it's gonna be robust to the other output gap measures.
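As a rough illustration of the ratio columns just described, here is a minimal Python sketch. It assumes a simple quadratic loss in inflation and the output gap; the function names, the 2% target, the equal weights, and the toy observations are all hypothetical and are not taken from the paper.

```python
from statistics import mean

def quadratic_loss(inflation, output_gap, target=2.0, gap_weight=1.0):
    # Hypothetical loss: squared inflation miss plus weighted squared output gap.
    return (inflation - target) ** 2 + gap_weight * output_gap ** 2

def era_loss_ratio(observations, intermediate_as=None):
    """Ratio of mean loss in discretionary eras to mean loss in rule-like eras.

    observations: list of (era_label, inflation, output_gap), with labels
    'rule', 'discretionary', or 'intermediate'.
    intermediate_as: None drops the intermediate era; 'rule' or
    'discretionary' reassigns it to that group.
    """
    losses = {"rule": [], "discretionary": []}
    for label, inflation, gap in observations:
        if label == "intermediate":
            if intermediate_as is None:
                continue
            label = intermediate_as
        losses[label].append(quadratic_loss(inflation, gap))
    return mean(losses["discretionary"]) / mean(losses["rule"])

# Toy data, purely illustrative.
toy = [
    ("rule", 2.5, 0.5),
    ("rule", 1.5, -0.5),
    ("discretionary", 5.0, 1.0),
    ("discretionary", 4.0, -2.0),
    ("intermediate", 3.0, 1.0),
]

ratio_ignore = era_loss_ratio(toy)                    # intermediate era dropped
ratio_as_rule = era_loss_ratio(toy, "rule")           # intermediate counted as rule-like
ratio_as_disc = era_loss_ratio(toy, "discretionary")  # intermediate counted as discretionary
```

A ratio above one under all three treatments corresponds to the pattern described for the table: losses are higher in discretionary eras however the intermediate era is classified.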

So these results are gonna be robust across the board for the United States, even if the details are quite different when we use the other specifications. So in this table, I provide a brief look at the other methods in regression analysis, and I find, for example, there's a broad trend that 1965 to 1974 generally looks rule-like.

And that's kind of the broad Bretton Woods period, where we're still connecting the dollar to the gold standard to some degree. Then in 1974 to 1984, we have this period of very high deviations, with policy first being too loose, then too tight, all of which is being classified as a discretionary period across the various measures for the most part.

1984 through 2000, some of the measures don't exactly agree, but broadly speaking, that's gonna end up being rule-like, regardless of which output gap or which rule I use. And then, since 2000, it's kind of gone more towards discretionary. So then, turning to the regression analysis, for the United States overall it's gonna be fairly consistent with what I just said in the structural break analysis.

The one thing that the regression analysis is gonna say slightly differently is that there's a lot less robustness for the fifth quadratic loss function, the one that cares about output stability. Inflation stability is clearly related, if not in terms of causation, at least in terms of correlation. But for the fifth quadratic loss function, which cares more about output gaps, that becomes a weaker result.

And in terms of Granger causality, I find clear evidence that losses Granger-cause deviations from the original Taylor rule and that deviations Granger-cause losses, but primarily only for the loss function measures that care about inflation. For measures that do not care about inflation, there's gonna be a lot less of that causality.

And so the rest of the paper is kind of going through a bunch of other countries, and I do not have the time to go through all these countries. I will just briefly talk about one particular example in the case of, let's say, Canada, and then talk about the summary results in a moment.

So this is the result of the structural break test for Canada. We find that the first period, where the Canadian Central Bank had a fixed exchange rate in the late 1960s and early 1970s, was a rule-like period. Then we had a long discretionary period, and then a rule-like period since 1995.

And this transition here, the Bank of Canada adopted an inflation target in the early 1990s, but that inflation target had a transition period from 1991 to 1995, during which they were slowly moving the inflation target down. And so the structural break is right at the point when they wanted to bring inflation to 2%, in 1995, and that's the start of the rule-like period.

Whether that is because inflation is more stable at this point or because they adopted inflation targeting is something I cannot really distinguish in my analysis for sure. However, in terms of the Granger-Causality here, there's only causality from losses to deviations for Canada, there is no Granger-Causality from deviations to losses.

And so the Granger-Causality results, more generally, are quite all over the place, in a sense, sometimes there is, sometimes there isn't, and sometimes it depends on kind of the direction. And in terms of losses, kind of the standard result is gonna be here as well, that losses are gonna be lowest in rule-like periods relative to discretionary, regardless of how I classify this intermediate era.

So I'm gonna skip all the other country results unless there are any questions. The other countries I do use are UK, Japan, Italy, Mexico, New Zealand, Norway, and I think Australia is the one that I was missing. But let me turn to what I wanted to talk about, the summary results.

And so in this table, I try to combine everything I talk about here and a bunch of stuff that's in the paper that's not talked about yet and thinking about, well, what's the overall picture? And so over these two slides, I have the table that kind of shows the summary results.

So the first two columns are asking, in the structural break analysis, what's the number of the seven loss functions that have lower losses in discretionary eras relative to rules-based eras? And a few of these depend on the specific assumptions, but for the most part, for the US, UK, Australia, Canada, across the board losses are higher in discretionary periods than in rule-like periods.

And then for the other countries, that's also true for Japan, Italy, Mexico, mostly New Zealand, but not Norway. Norway is the one exception to all of my results, where there's either no relationship or, potentially, Norway might actually be doing better in periods when monetary policy seems less rule-like.

And Michael Bordo, did you have a question?

>> Michael Bordo: Yeah, so if you go back to the Canada slide, cuz the interesting thing is that, so, yeah. So the middle slide, where it's 215, okay, so that's a period when actually they were following a rule, but it was the Friedman rule, okay.

So, they were following money growth targets, and they were lowering their targets, okay, and they abandoned it because they said it didn't work, okay. So I mean, you talk about a Taylor rule, but in a sense, they were being rule-like, but it wasn't very good.

>> Yevgeniy Teryoshin: But again, my approach is kind of relative to the Taylor rules, which is a limitation of this approach.

So the other thing I wanna just briefly talk about is the regression analysis, kind of in terms of the results. And so here, the pluses represent a positive relationship, and the minuses represent a negative relationship. And the main point I wanna make, if we look across these two slides, is for everyone except New Zealand and Norway.

So for seven of the nine countries, there's a fairly consistent positive relationship: periods with higher average deviations have higher losses according to all but the fifth quadratic loss function. And for the fifth quadratic loss function, sometimes there is no relationship, sometimes it's positive, and then for places like Canada, it's negative.

Rule-like periods, at least according to these two Taylor rules, actually ended up having generally worse output stability than more discretionary periods relative to these rules. And so that kind of gets to my general result that rule-like policy seems to have a much stronger relationship with inflation stability rather than output stability.

Because the output stability results are all over the place, sometimes positive, sometimes none, sometimes negative, depending on the country that we are looking at. And so, in terms of the panel data results, the approach is kind of very similar. I can't do the structural break analysis because that part isn't transferable.

But the regression analysis and the Granger causality tests are, and so I'll briefly talk about those. In terms of the relationship, what I'm running is essentially that same regression with fixed effects for countries and years. And for the causality testing, the main specification I'm gonna be talking about is the Dumitrescu and Hurlin Granger causality test,

which is a test where the null hypothesis is homogeneous non-causality between the variables, and the alternative is that there's gonna be some set of countries for which there is Granger causality. And so let me briefly talk about these results.
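To make the panel regression concrete, here is a minimal Python sketch of a regression of losses on deviations with country and year fixed effects, computed by the standard within (double-demeaning) transformation. It assumes a balanced panel and a single regressor; the function name and the toy data are hypothetical, and the sketch does not implement the Dumitrescu and Hurlin test itself.

```python
def two_way_fe_slope(panel):
    """Slope of loss on deviation with country and year fixed effects.

    panel: dict mapping (country, year) -> (deviation, loss).
    Assumes a balanced panel (every country observed in every year).
    """
    countries = sorted({c for c, _ in panel})
    years = sorted({t for _, t in panel})

    def demean(idx):
        # Subtract country mean and year mean, add back the grand mean.
        vals = {k: v[idx] for k, v in panel.items()}
        grand = sum(vals.values()) / len(vals)
        c_mean = {c: sum(vals[(c, t)] for t in years) / len(years)
                  for c in countries}
        t_mean = {t: sum(vals[(c, t)] for c in countries) / len(countries)
                  for t in years}
        return {(c, t): vals[(c, t)] - c_mean[c] - t_mean[t] + grand
                for (c, t) in vals}

    x = demean(0)
    y = demean(1)
    sxx = sum(v * v for v in x.values())
    sxy = sum(x[k] * y[k] for k in x)
    return sxy / sxx

# Toy panel built so that the true slope is 0.6 (illustrative numbers only).
alpha = {"A": 1.0, "B": 3.0}          # hypothetical country effects
gamma = {1: 0.0, 2: 0.5, 3: -0.2}     # hypothetical year effects
deviation = {("A", 1): 1.0, ("A", 2): 2.0, ("A", 3): 4.0,
             ("B", 1): 2.0, ("B", 2): 5.0, ("B", 3): 3.0}
panel = {k: (d, 0.6 * d + alpha[k[0]] + gamma[k[1]])
         for k, d in deviation.items()}

beta = two_way_fe_slope(panel)
```

Because the toy losses are an exact linear function of deviations plus the two sets of effects, the estimated slope recovers the 0.6 used to build the data, which is a quick check that the demeaning removes both country and year effects.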

>> John Cochrane: Before you go on. I'm a little worried about regressing losses at T on deviation at T, because, of course, a big output shock is gonna make you look like a deviation and like a loss.

I would have thought, and I don't wanna get into identification, but at least a lag, that deviations at T would cause losses at T plus one. At least, so it isn't just automatic that a big output shock leads you to both a loss and a deviation.

>> Yevgeniy Teryoshin: Kind of two things. One is that these deviation measures are kind of weighted averages over a period of time, and so they're not just that period. The second thing is, I did have that in a previous version of the paper, and the referees argued that that is not worthwhile to have.

>> John Cochrane: My condolences. But no, explaining that it's an average over time helps a lot.

>> Yevgeniy Teryoshin: Yeah, so these are typically kind of the main specification, this is the three year moving average of the deviations. So let me get to the results, these are the broad kind of lots of numbers.
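A minimal Python sketch of how such a smoothed deviation measure could be built is given below. It uses the original Taylor (1993) rule with a 2% equilibrium real rate and a 2% inflation target; the modified rule is sketched by raising the output gap coefficient to 1.0. The use of absolute deviations, the trailing (rather than centered) window, and the toy numbers are assumptions for illustration, not details confirmed by the paper.

```python
def taylor_rate(inflation, output_gap, r_star=2.0, pi_star=2.0, gap_coef=0.5):
    # Original Taylor rule: i = r* + pi + 0.5 * (pi - pi*) + gap_coef * gap.
    # gap_coef=1.0 gives a modified rule with a stronger output response.
    return r_star + inflation + 0.5 * (inflation - pi_star) + gap_coef * output_gap

def abs_deviations(policy_rates, inflation, output_gaps, **rule_kwargs):
    # Absolute gap between the actual policy rate and the rule's prescription.
    return [abs(i - taylor_rate(p, y, **rule_kwargs))
            for i, p, y in zip(policy_rates, inflation, output_gaps)]

def trailing_average(values, window=3):
    # Trailing moving average; the first window-1 observations are dropped.
    return [sum(values[k - window + 1:k + 1]) / window
            for k in range(window - 1, len(values))]

# Toy annual data (illustrative only): inflation on target, zero output gap,
# so the original rule prescribes 4.0 in every period.
rates = [4.0, 5.0, 3.0, 6.0]
infl = [2.0, 2.0, 2.0, 2.0]
gaps = [0.0, 0.0, 0.0, 0.0]

devs = abs_deviations(rates, infl, gaps)
smoothed = trailing_average(devs, window=3)
```

With quarterly data, a three-year window would be `window=12`; the annual toy series above uses `window=3` only to keep the example short.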

Maybe it would have been better to emphasize a couple of them, and I'll just do so verbally, even if I did not emphasize them quite as much as I should have graphically. So the first thing is for these first two loss functions. These are loss functions that care about the absolute deviation of, sorry, inflation and output from their objectives, just in their raw values.

And so what we see across the various specifications is that a 100 basis point increase in deviations from these policy rules results in, or is associated with, about a 0.5 to 0.8% joint movement of inflation and output away from their targets. That's the overall magnitude of this relationship. And then the other thing, again, is the fifth quadratic loss function: depending on the specification, there is no effect.

And so again, that relationship for output stability is much, much weaker than for inflation stability. For the Granger causality test, these are the p-values for the null hypothesis that deviations homogeneously do not cause losses. So if we reject the null, that means there's some set of countries for which deviations do Granger-cause losses.

Again, a very similar picture: generally speaking, most of these values are gonna be less than 0.1. Most of these are less than the 1% requirement for the cutoff, except for the fifth quadratic loss function. So again, that relationship for inflation stability seems to be there in the Granger causality data for at least some countries; for output stability, that relationship does not seem to be there.

Now, another question you might ask me is why do I do this test where the null hypothesis is that deviations homogeneously do not cause losses? Why do I not test whether there is homogeneous Granger causality rather than homogeneous Granger non-causality? And the answer to that is essentially, I've done the other version as well.

And the results vary depending on the assumptions I'm making. So if the question I'm asking is whether deviations Granger-cause losses homogeneously, with the same relationship for all the countries, I tend to find in favor of that. If I allow for different degrees of Granger causality across countries, I tend to reject the null hypothesis.

So with the homogeneous test, the results are very specific to the modeling form. And so let me wrap up by talking about.

>> Steve Davis: There's a question, okay, so first comment. I think your terminology here is really a barrier to clarity and thinking and communication. So I'll just recap some points that were made earlier.

Both Mike Bordo and John Cochrane made the point that just because you see big deviations doesn't mean they're not following a rule, okay? So deviations don't imply discretion, okay? That's the summary of that point. John Cochrane also made a version of the point, which I'll state somewhat differently, that just because you've got small deviations doesn't mean that the Central Bank is following a rule.

John referenced the Great Moderation period, but the broader point is that just because you see small deviations, you can't infer that it's a rule. So I really think you ought to drop the terminology you've got now. What you are telling us about is the extent to which outcomes are consistent or inconsistent with the particular rules you're considering.

That's just a much more useful way to talk about what you're doing. Second thing, a very different comment now: I think what would be really interesting is to look at the deviations, the inconsistencies from whatever rules you consider across these countries, and you've got one, two, maybe three at most for each country.

So I don't wanna see some fancy time series econometrics, I wanna see an explanation of each episode. There's a small enough number that you ought to be able to give us a narrative interpretation of each episode. And when I think about what might be going on, three things come to mind.

The first is kind of by way of an analogy to incomplete contracts. There are shocks or circumstances that arise that are so unusual that there's an agreement among many that we ought to deviate from a previously specified rule. Maybe the pandemic was of that category. I know it's not in your time period, but if you go back to the oil price shock, that was at the time regarded as an extraordinarily unusual development.

It caught many economists flat-footed, and some famous ones thought that the oil price shock would soon unwind. There was a lot of confusion about, well, to what extent was the oil price shock associated with a productivity slowdown and so on. So that's just one possibility. Another is that there are political pressures on the Central Bank that undermine either its independence or its credibility, and that's what leads to a departure from a rule.

And a third, maybe somewhat related, is there's a big giant fiscal shock, never have those, which the monetary authority either decides or is compelled to accommodate, and that's what leads to inflation. So I'd like to see you take the analysis in which you identify these episodes where you've got these big departures from well-established rules, and then explain them in a narrative fashion rather than in some time series econometrics setup.

I don't see that as a productive path in this particular setting.

>> Male Speaker 1: I'm sorry, I would add that financial stability is something that most central banks care about. And if we look at Argentina, they had the ultimate rule.

We will have one peso equal to $1. We will never deviate from that, they said this multiple times, and it lasted for a bunch of years. And then when the banking system got into trouble, it deviated from its rule. It dropped the peg. That seems to be what's going on now in the United States: concern for the financial stability of the banking system is something that is causing the Fed to rethink how fast they should be raising rates.

And the Taylor rule that you're looking at doesn't say raise rates gradually. And that seems to be something that people find systematically when they look at Taylor rules. The lagged interest rate is always important in the process. And my understanding has always been that that is because they didn't want to do things too rapidly because of financial stability considerations.

>> Yevgeniy Teryoshin: So let me respond to that in as much as I can for this moment. But I do agree with the broad points. So, first, regarding the terminology, a lot of that was based on how the literature here does this.

I do agree that what I'm really doing is asking whether policy is consistent with these policy rules, and perhaps the way I'm choosing to phrase that is not necessarily helpful. That is what the previous literature adopted, and so that's what I was following. But, I mean, the previous literature actually said rules-based, and I try to at least get away from rules-based to rule-like.

Which kind of captures a little bit of that idea that this is more, are they consistent with a particular policy or rule or not? But I do agree that's what I'm really doing. And perhaps that might be a better way to frame it, in terms of an actual kind of argument of what's going on in each of these.

So in the paper I do provide some of these things. So for example, one of those things you were mentioning is Central Bank independence. If we go to, say, the Italy case, the period when the central bank was the residual purchaser of all of the government securities was the discretionary period.

The remaining period, which looks a little bit more rule-like, is the period once they had some Central Bank independence. And so in the paper, I do try, at least for my main specification, to provide some of the arguments that you seem to be asking for about what's going on in each of these periods.

But I guess in terms of the big picture, at least for the way I was thinking about this paper, is it's really hard to have a very specific argument on these sort of things. Because all of the specific structural break dates are gonna depend on all the modeling assumptions on what is the output gap, what is inflation, what policy rule am I thinking about?

And so it's hard to necessarily have this, the structural breaks are gonna depend a little bit on those. And so it would be a completely different paper, perhaps a better paper, perhaps not. If I were to kind of choose a particular policy rule and really get into what's causing those deviations, what's causing those errors, as you're suggesting.

But I would essentially have to end up taking some sort of stance on what is the right rule for that Central Bank.

>> Male Speaker 1: Could I-

>> John Taylor: No, no, no. I wanna follow up on Steve Davis, because those were great comments. I think you need something like R squared.

You wanna know, when inflation and output vary, does the Central Bank raise interest rates on average the way it should? So you wanna see it responding, whereas with the current measure, you could have all the deviations equal to zero, but you just didn't have much movement going on. So R-squared, something like that, says, are you following the rule?

And then how closely are you following the rule? And I think that would help. The other is, I wanna strike a position halfway between Steve and what you've done. I think the ideal thing combines econometric analysis with historical narrative. So the econometric analysis is, yeah, they start following a rule, and the historical narrative says, yeah, they said they were starting to follow a rule, which is helpful.
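One way to read the R-squared suggestion is sketched below in Python: regress the actual policy rate on the rate the rule prescribes and report the slope and R-squared alongside the average deviation. The function names and the toy series are hypothetical, and this is only one possible interpretation of the comment, not a method from the paper.

```python
def slope_and_r_squared(prescribed, actual):
    """OLS of actual rate on prescribed rate (with intercept): (slope, R^2)."""
    n = len(prescribed)
    x_bar = sum(prescribed) / n
    y_bar = sum(actual) / n
    sxx = sum((x - x_bar) ** 2 for x in prescribed)
    syy = sum((y - y_bar) ** 2 for y in actual)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(prescribed, actual))
    if sxx == 0 or syy == 0:
        # No variation to explain; report zeros by convention in this sketch.
        return 0.0, 0.0
    return sxy / sxx, (sxy * sxy) / (sxx * syy)

def mean_abs_deviation(prescribed, actual):
    return sum(abs(y - x) for x, y in zip(prescribed, actual)) / len(prescribed)

# Case 1: policy moves one-for-one with the rule but sits 1 point above it.
rule_1 = [1.0, 2.0, 3.0, 4.0]
actual_1 = [2.0, 3.0, 4.0, 5.0]

# Case 2: little movement overall, small deviations, but no systematic response.
rule_2 = [2.0, 3.0, 2.0, 3.0]
actual_2 = [3.0, 3.0, 2.0, 2.0]

tracking = slope_and_r_squared(rule_1, actual_1)
quiet = slope_and_r_squared(rule_2, actual_2)
dev_tracking = mean_abs_deviation(rule_1, actual_1)
dev_quiet = mean_abs_deviation(rule_2, actual_2)
```

In this toy comparison the second case has the smaller average deviation but a zero slope and zero R-squared, while the first case has larger deviations yet responds to the rule one-for-one, which is the distinction the comment draws between small deviations and actually following the rule.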

In that regard, though, two-way Granger causality is an advantage for you. That's something to be proud of, not embarrassed of. Think of Canada, why did they implement an inflation target? Because they were getting all sorts of wild stuff under the previous regime. So clearly the big volatility caused them to adopt an inflation target.

And in our reading of history, that settled things down. But you should see Granger causality from volatility to the inflation target. Same with the US, why did the Fed start doing things right in the 1980s? Well, the 1970s were pretty unpleasant, and that caused the change in policy. So reverse causality, or two-way causality, is entirely a great thing.

Central Banks who just wake up and exogenously say we're gonna do something different, it's usually a bad idea.

>> Yevgeniy Teryoshin: Since I think we're in the question and answer period, let me just briefly conclude on what I was saying, and then I'll open up.

So in terms of the big picture of this paper, with the caveats, especially the ones John Cochrane has been talking about, am I really capturing the right thing? But in terms of what the results are, given those limitations, for seven of the nine countries, there's a clear relationship where periods that are more consistent with Taylor rule-like policy, either original or modified, have lower losses.

And most of that is coming from better inflation stability in periods where policy seems more rule-like, and the Granger causality things I already talked about. So I suppose, Andy, go ahead.

>> Andy: Yeah, so the question I have is your whole narrative today didn't really mention anything about the great financial crisis.

And it's right smack dab at the end of your intermediate zone for the United States. It's not apparent in any of the other countries, and so one has to wonder what the underlying assumption is here. Is it that monetary policy doesn't have much of an impact in terms of these rules on financial stability?

And the great financial crisis was simply a meteor kind of hitting the earth, just completely out of the system? Or is there something else going on, which is the systematic deviation during the intermediate period, where monetary policy, not just in the United States, not just in the countries we're looking at, but in countries across the board, Latin America, Asia, other places, was following policies that were not consistent with the Taylor rule, not even close.

Monetary policy was too easy compared to those rules. And the argument was that you had insufficient emphasis on financial stability in monetary policy rules, where imbalances were growing in the economy as interest rates and inflation remained low.

And then that was gonna ultimately lead to a big financial and economic loss and it did. But you don't see that at all in your analysis. Like the economic losses don't seem to be particularly large. They don't seem to produce particularly large changes in the policy rule coefficients according to your breakpoint test.

So I'm just wondering, what are you thinking about in terms of that period? Is there a way to capture the losses that were implicit in the great financial crisis? And can you link it back somehow to the systematic deviation of policy rules, all the one sided errors in those rules that were being followed at the time?

>> Yevgeniy Teryoshin: I guess the first part about why it's so problematic to do so is, if we go back to the very, very first slide I showed you, was policy actually inconsistent with policy rules in the period prior to the financial crisis? John Taylor would argue that it was, Ben Bernanke would say that it was not.

And so that period of the early-to-mid 2000s, across both the United States and a lot of other countries, seems to be somewhere in between, for the most part, between really discretionary and very rule-like. And it really depends on the specific assumptions you're making. And so I don't think this analysis necessarily has the capability to really address the question you're asking.

For that early 2000s period, there's no robust result on whether or not that period was actually consistently away from policy rules. From my analysis, I don't have any way to say that that period was particularly away from the policy recommendations or consistent with those policy recommendations at all.

>> Andy: But like what Steve was saying, if you think about the narrative that you might wanna place in this period, you would say, okay, great financial crisis happens. I look at these two rules you have up there, the original Taylor rule and the Bernanke rule. You'd say, that's one for John and one against Ben.

>> Yevgeniy Teryoshin: I would agree with that to some degree. I guess the point of this exercise is more to try not to be too narrative driven, in a sense. And so another way to interpret the results would be, if we go back to the United States.

If you wanted to go the other direction, you could say that what really is happening here is that the entire period from 1984 through 2007 is one broadly consistent with policy rules. And then suddenly, a shock hit the world.

There was a large recession, and that recession kind of forced policy away from the rules, because there are large deviations then. I can make narratives in both directions. I can't really distinguish which of those narratives is the correct narrative, in a sense. David, did you have a question?

>> David: Okay, so, first of all, not surprisingly, there's a lot about this paper that I like a lot, which won't surprise anyone.

I just have a few small points. One is on the output versus the unemployment gaps. We did have some work in our 2021 paper, “Policy Rules and Economic Performance,” that used the real-time unemployment rates that Bob Gordon did in his series of textbooks starting in 1978, which Evan Koenig assembled for his work.

And it really doesn't make much difference whether you use unemployment gaps or output gaps, which is like what John Taylor said. On the HP versus quadratic detrending, our results are only for the 1970s on that. And so there's no conflict between that in our work and your work.

On the issue of deviations versus absolute value of deviations, we have a paper called “The Taylor Principles.” In that one, we use these same types of econometric techniques to look at the deviation itself, not just its size, and there you actually get a break in 1979: you get the distinction between the Great Inflation and the Volcker disinflation.

And the Volcker disinflation period was the only period where the actual policy was above the rule. But for the purpose of that paper, we estimated these rules and found, not that differently from a lot of other people, that the coefficient on inflation was not significantly different from one in the Great Inflation period, but the coefficient on the output gap was significantly different from zero.

During the Volcker disinflation, it was the opposite. And in the Great Moderation, they were both significant in the way you would think. So I might take a little issue with saying that having a coefficient on inflation that's not different from one could be consistent with the rule, because this would be a rule that would give you indeterminacy in inflation.

And so I would have trouble calling that a policy rule, where the policy rule wouldn't give you inflation determinacy. And on the causality part, we have some in that 2021 paper, where we looked at losses for six and eight quarters after the changes from high to low deviations.

And that's not perfect, but that's the best we could think of doing in terms of thinking about causality. Finally, in terms of inertial rules, we have an ongoing paper, “Policy Rules and Forward Guidance Following the COVID-19 Recession.”

We look at deviations from inertial and non-inertial versions of a whole bunch of policy rules, and the deviations from the inertial rules are smallest from now going forward. Looking at SEP projections, what we find is that the inertial Taylor rule fits really, really well for projected Fed policy, again, using the December 2022 SEP, although we will update it for March 2023.

And that's to the extent that we put out an Econbrowser post about a month ago with the title “The Fed is following the Taylor rule.”

>> Yevgeniy Teryoshin: I think David makes some good points. The only thing that I think actually requires a response, most of those were just comments that I think are useful and informative.

The one thing I would just say is that, in theory, there's nothing to say that rule-based policy has to be good. In a sense, if a Central Bank wants to follow a policy that, in a model, results in indeterminacy, and if it's still really following that rule, then in a sense that is rule-like policy. We don't necessarily have to say that a coefficient less than one on inflation is not a rule.

To me, if you're following the rule, it's still a rule. However, in terms of what I wanna be analyzing, that is probably not the most relevant case for this sort of analysis.

>> John Cochrane: And if you're gonna bring that one up, the result that a coefficient less than one leads to indeterminacy is in New Keynesian models with a passive fiscal policy.

Other models don't have that result. And if you need a coefficient greater than one to get determinacy, then it's not identified in sample and you don't observe a coefficient greater than one anyway. So that's a whole snake pit of problems.

>> Yevgeniy Teryoshin: The one thing I would just say kind of on that broad general trend is, so in one of the earlier versions of this paper, I did this analysis with estimated Taylor rules as well as the ones that I have here.

A lot of those estimated Taylor rules, and not a lot, but some of those estimated Taylor rules ended up with coefficients less than one as the estimate, although kind of identifying that estimate properly is problematic. But if you do this analysis for some of these countries with these Taylor rules that have coefficients less than one, the broad results are gonna be generally similar, but they're gonna be much weaker as well.

But again, I've not really thought systematically about central banks following those policy rules with lower coefficients.

>> John Taylor: So one thing that many of these questions are asking about is, why focus on this one version? Why not have Milton Friedman's rule, David talked about that. Why not have fiscal policy?

Why do you focus on this particular rule? Why do that?

>> John Cochrane: Strange question for you to ask, John.

>> John Taylor: Choose one that's better. You should say, which one do you wanna use? You have to start your research somewhere. I mean, Steve says there was political pressure on the Central Bank, that may be why they deviated.

So I think somehow this, you choose a rule, you look at the differences, is one way to go, and there are other ways to do it, sure. I mean, this seems to me constructive. I mean, the Fed has published these rules in its reports, they all know about it.

They don't know about all these other things, so it seems to me it's a reasonable place to begin. And your results, I mean, as far as I can understand them, you didn't talk about the other countries that much. Maybe you could do that in a couple of minutes, but that's somewhat convincing to me.

I think what you have to say is it's out there, the Fed's talking about it, other central banks are talking about it, let's look at this. That's what I'd say.

>> Yevgeniy Teryoshin: I guess my one response to that would be that technically, if I were to take that approach, I should probably focus more on inertial Taylor rules.

That is the rules that central bank tends to at least emphasize at present. So perhaps, and I mean, the analysis could easily be redone with inertial Taylor rules as long as I chose a particular value for that inertia. In terms of the other countries, I did not really talk too much about them beyond saying that the results are broadly similar.

In the sense that, at the end of the day, when we look at the deviations in rule versus discretionary periods across the board, except for Norway and New Zealand. When I'm using the HP filter with lambda equals 1600, the results are the same across Mexico, Italy, Japan, UK, Australia, Canada.

All the other countries except for Norway and New Zealand with this other filter end up with the same final picture of saying that periods with more rule-like monetary policy relative to these two policy rules, ends up having lower deviations. In the paper, I go through kind of the specific values, so we already talked about Canada for a little bit.

And so we had that period of fixed exchange rate, then we had no monetary targets, then we had monetary targets, as Michael Bordo mentioned. And then this was largely a period where there was a transition to inflation targeting, followed by inflation targeting of 2% since 1985. These were the structural breaks I estimated kind of in my perspective relative to these policy rules.

This period, that is rule-like relative to the rules that Michael Bordo was mentioning, is not rule-like really relative to the policy rules I'm considering. So I can think about this entire time period as one long discretionary period in my preferred specification. And then if we go to the specific details of the loss function analysis, let me jump there.

I would end up with kind of a table that looks a lot like the table I showed you for the United States, where rule-like eras have the lowest deviation. Discretionary eras have the highest losses, and intermediate eras are, in this case, either as bad as discretionary or about the same.

But regardless of how I classify this intermediate era, I still end up with this general picture that periods that are discretionary are gonna have losses from one and a half to about 17 times higher. And I could give you a bunch more of these pictures if you want, John.

The paper has a lot of these pictures, but they end up being kind of quite similar. So that's Canada. Australia has kind of a very similar picture, losses substantially higher. United Kingdom, same picture. The only exception to that is gonna be the Norway case.

>> Male Speaker 2: Could I just second what John Taylor said and John Cochrane said a moment ago about exploring some other things?

Another natural thing to do would be to look at this from the standpoint of what the central banks were saying they were trying to do. For example, some of these were exchange rate targeting or money supply targeting and things of that sort. I mean, it's interesting to say if they had followed a Taylor rule back before the Taylor rule was invented, they might have done better.

But it seems to me that would be another thing that would be of interest, just to see if you could figure out how consistently they were following what they claimed they were doing, number one. And number two, I think it would be interesting for a monetary and fiscal history as well.

>> Yevgeniy Teryoshin: I think that's a good point, to look at other things in a similar way.

>> John Taylor: Another small point is these countries are not completely separate, they're related.

>> Yevgeniy Teryoshin: Yeah.

>> John Taylor: And the actions of the ECB may be affected by the actions in the US and Japan, so maybe that's the next step.

How do you incorporate that correlation a little bit, the different philosophies of central banks?

>> John Cochrane: If I could add, we had a great seminar here by Carstens a while ago, talking to us about the Central Bank of Mexico, who said, look, you guys, we love your Taylor rule. But for Mexico, we pay attention to the exchange rate and we pay attention to the US federal funds rate, cuz if we don't, we get trampled by a herd of elephants.

So kinda natural to think of a small open economy not following a pure Taylor rule, but paying attention to those variables in fine rule-like fashion because they got to.

>> John Taylor: Any other points, questions?

>> Yevgeniy Teryoshin: One last thing: I'll just respond briefly to John in saying that I guess to some degree you have to make some choices in terms of what are the rules I wanna be thinking about.

And to me at least, it's interesting that even for countries that would not necessarily just follow something like a Taylor rule in an optimal setting, where in an optimal setting they should be caring about exchange rates, if the economic performance tends to be consistently better in rule-like periods, that is an interesting result in itself.

One possibility that I was thinking about during this process, and that referees were suggesting, is thinking about more specific policy rules for particular countries, such as introducing exchange rates, introducing inertia. But at some point, I need to kind of make some assumptions, kind of make some choices, and these are the choices that I ended up making, kind of.

And I'm not sure how much scope there is for similar papers that make slightly different assumptions on that.

>> John Taylor: Thank you very much Yevgeniy, appreciate it. Thank you, and virtual audience.