The recent dramatic events in Texas are an early warning sign of the disasters that are likely to occur if the Biden administration continues its relentless effort to demonize the use of fossil fuels in the effort to combat climate change.

Assessing whether the climate is really changing requires looking at two numbers. The first is mean global temperatures across time. While that figure is increasing overall, it shows a complex up-down pattern that cannot be explained solely by the steady increase in carbon dioxide emissions. The higher the mean temperatures, the worse the supposed problem.

The second measure, though often neglected, is every bit as important: the variance in temperatures, whether measured in days, seasons, or years. A lower variance over a relevant time period means less stress on the power grid and other systems, even when the mean temperature increases. The general trend is that the variance in the temperature has gone down over time. Even today, for example, a large fraction of the record high temperatures in the United States took place in the 1930s—when carbon dioxide levels were far lower than they are today—with only three record highs after 2000.

To give a simple example as to why variance matters, assume there is a five-mile span of railroad track that faces, on average, a 1 percent upward grade. In general, that grade means that ordinary locomotives can navigate the slope with only a minimum loss in efficiency. But the picture could change once variance is taken into account. For instance, assume that for four miles the grade of the railroad is zero, but for one mile the grade is at 5 percent. Although the average upward gradient is still 1 percent, the railroad may well become unusable by long freight trains because they won’t be able to get over the hump once the grade reaches even 1.5 percent. Engineers carve out mountainsides and build tunnels to allow those trains to operate without facing extreme grades. The same argument applies to potholes in roads. A car can easily negotiate a large succession of little bumps, but might well crash if it encounters a deep gap in its path.
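The arithmetic behind the railroad example can be checked with a short sketch. It assumes the grade is recorded once per mile and reads the text's 1.5 percent figure as a hard limit for long freight trains; the names `uniform`, `uneven`, `MAX_GRADE`, `passable`, and `summarize` are illustrative only and do not come from any engineering source.

```python
from statistics import mean, pvariance

# Grade (in percent) for each mile of the five-mile span.
uniform = [1.0, 1.0, 1.0, 1.0, 1.0]  # steady 1 percent grade
uneven = [0.0, 0.0, 0.0, 0.0, 5.0]   # four flat miles, one mile at 5 percent

# Assumption taken from the example: trains stall once the grade reaches 1.5 percent.
MAX_GRADE = 1.5


def passable(grades, limit=MAX_GRADE):
    """A track is usable only if its steepest mile stays below the limit."""
    return max(grades) < limit


def summarize(grades):
    """Return the mean grade, the population variance, and whether trains can pass."""
    return {
        "mean": mean(grades),
        "variance": pvariance(grades),
        "passable": passable(grades),
    }


if __name__ == "__main__":
    print("uniform:", summarize(uniform))
    print("uneven: ", summarize(uneven))
```

Both tracks have the same mean grade of 1 percent, but the variance rises from 0 to 4 and only the uniform track stays under the limit. The average alone says nothing about whether the system can be used.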

The importance of variance is well illustrated in connection with the ongoing catastrophic situation in Texas, where a severe and unexpected cold spell led to the collapse of the energy grid. As the temperatures dropped throughout the state, the strains in the grid rose exponentially. This massive failure left millions of people without electricity and heat for days, causing many dozens of storm-related deaths, if not more. The state has been declared a disaster area, with federal aid set to pour in and state Attorney General Ken Paxton poised to investigate the ill-named Electric Reliability Council of Texas (ERCOT), which is charged with ensuring that electrical power gets where it needs to go throughout the state.

The situation in Texas has raised the blame game to new levels. What caused the power failure? Paul Krugman has taken his familiar stance in the New York Times that the fault lies with corrupt conservative state politicians who have systematically refused to order the addition of public and private funds to harden the grid. Ironically, those very conservatives placed too much reliance on wind energy and made too little investment in natural gas, leading to the current problem. Krugman wrongly accuses the usual suspects (Republican politicians and the right-wing media) of advancing “a malicious falsehood . . . that wind and solar power caused the collapse of the Texas power grid.” He then skews the analysis by claiming that shortages in natural gas are the source of the problem, while extolling the use of cheap wind power, which Texas has in abundance.

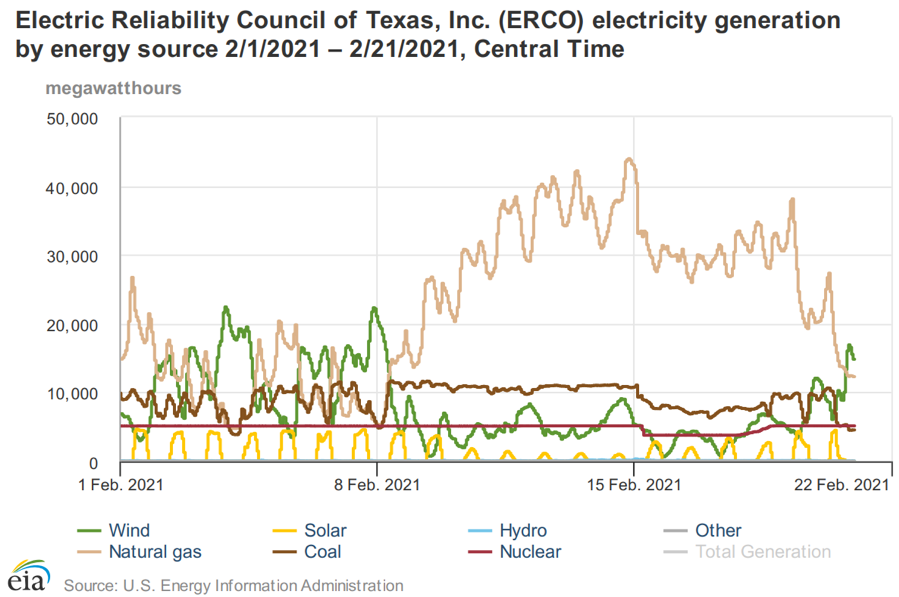

Indeed, the state has long relied on a huge installed base of wind energy, which, before the current power outage, was lauded on the ground that it has “saved twenty billion gallons of water, avoided nearly thirty-nine million carbon dioxide emissions, and powered more than five million homes during 2016 alone.” Strong winds can do wonders in good times. But a failure analysis of detailed data from Texas, conducted by the US Energy Information Administration, tells a different story. Consider the following graph of electricity generation by source in Texas across the month of February. The graph clearly shows that the contribution of wind to the power supply dropped precipitously while the increases in coal and natural gas were unable to make up the shortfall.

Thus, when critics of fossil fuels like Krugman point to the breakdown in the natural gas system, they overlook the fact that the overall performance of natural gas was far superior to that of wind. Indeed, when the Center of the American Experiment, a Minnesota think tank, rated the performance of various energy sources during the Texas outage, nuclear came in as the most reliable, performing at 74 percent of total capacity; coal and natural gas were at 39 and 38 percent respectively; solar was at 24 percent; hydro at 18 percent; and wind dead last at 12 percent. Reliability is an especially important aspect of the provision of energy, given the potential for high variance weather incidents such as sudden drops in temperature that necessitate large spikes in energy needs.

The key lesson, therefore, is to figure out how to toughen the grid against stress, rather than to create an energy grid that can provide a certain average amount of output. Wind and solar power are both hopeless as the primary energy source. It may well be that coal is more reliable than both natural gas and wind, but it is surely the case that the natural gas component (which ramped up fourfold as the wind power died in Texas) is far more reliable than wind. Any move to greater reliance on wind power, then, is the surest road to breakdown if the energy grid is subject to stress from extreme weather conditions. It is a lot easier to protect one pipeline from the cold than thousands of individual windmills spread across a vast landscape.

There is another important lesson to learn about failure analysis. The diehard opponents of fossil fuels do not distinguish sufficiently among various forms of fossil fuel. Nor do they make any effort to figure out how to make incremental improvements to the systems now in place. Instead, they claim that each and every failure is a function of global warming, and adopt institutional responses that lead to major environmental hazards. It is not the tiny year-over-year increase in global temperature that explains, for example, why much of Northern California erupted in flames in 2018. The true cause was a concerted decision to retreat from sound practices of forest management, a retreat that allowed deadwood kindling to accumulate on public lands, which then stoked the deadly blazes that followed. As a general rule, we must first think locally about the origins of adverse effects, not globally. The catastrophes in Texas and California are not satisfactorily explained by global warming or climate change.

Yet this lesson seems lost on the Biden administration, which has further constrained the use of fossil fuels by rescinding a Trump-era guidance on the matter. That guidance suggested allowing federal agency environmental review only for projects with high levels of greenhouse gas emissions. The question here is not whether to regulate greenhouse gas emissions, but how best to undertake this task in ways that achieve the desired level of emission reductions at the lowest cost.

Jomar Maldonado, the associate director for NEPA in Biden’s White House Council on Environmental Quality, claims that the Biden decision ensures greater certainty and a “firmer legal and scientific footing.” But he is wrong. Additional review only increases uncertainty, raises costs, and obscures the basic science. The clear intention of this latest action from the Biden administration is to build in higher costs, such that power companies will be reluctant to invest huge sums in pipeline construction that can be delayed or denied with the stroke of a pen. That is what happened last year to the Atlantic Coast Pipeline, when “legal uncertainties” derailed a huge project that would have displaced more inefficient modes of transfer.

As I wrote in a study for ConservAmerica in March 2020, the Trump guidance is especially needed for pipelines. Pipelines are designed to transport crude oil or natural gas from one place to another, and leakages from these facilities, usually buried deep underground, are tiny, so there is no dangerous interactive effect between the tiny emissions from different pipelines. The emissions that arise with fossil fuels occur with refineries and other downstream users. Yet the game of the Biden administration is to attack fossil fuel production at every single vulnerable point. The necessary, and intended, consequence of that strategy is to encourage energy producers to follow the Texas lead by relying more heavily on wind and solar energy—both of which work well until they don’t.