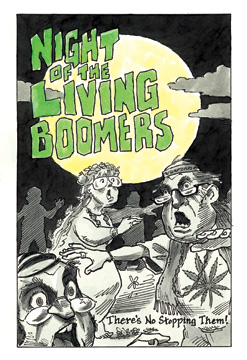

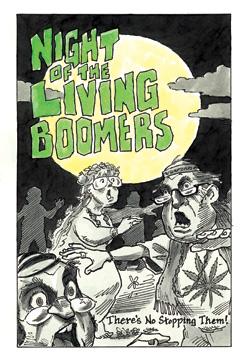

There is a looming catastrophe stalking the developed world. It promises to devastate the global economy, overwhelm hospitals, and decimate armed forces. What is the calamity that promises such misfortune? Not a killer virus, deadly terrorist attack, or natural disaster. It’s the aging of the world’s baby boomers, the coming tidal wave of senior citizens who will live longer, consume more, and produce less, seriously challenging societies’ ability to care for their graying ranks.

Those, at least, are the dire warnings. Alarming forecasts bombard us about an impending demographic crisis in the United States, Europe, Japan, and even China that will reshape the way we live and work. In just two decades, we’re told, there will be more Americans 65 and older than Americans younger than 15. By 2040, at least 45 percent of the populations of Spain and Italy will be 60 years or older. That same year, China will have 400 million elderly citizens. And in Japan, which is aging faster than any other country, more than 40 percent of the population will be elderly by the middle of this century. The fiscal burden of supporting this rapidly expanding segment of the global population threatens not only to bankrupt national health care systems and shrink armies, as countries’ median ages run past military age, but also to revolutionize electoral politics, with political clashes no longer governed by right versus left but by young versus old.

If it sounds distressing, it shouldn’t. Those gloomy projections are deeply flawed because of the misleading way in which we measure age. Typically, a person’s age has been determined by the number of years since birth; we are so accustomed to measuring age this way that most of us never give it a second thought. Thanks to the medical revolutions of the past century, however, life expectancies have been radically prolonged. Since 1960, the average Chinese person’s life span has increased by 36 years. Over roughly 40 years, South Koreans have seen their lifetimes extended by an average of 24 years, Mexicans by 17 years, and the French by nearly a decade. Given these drastic changes, our conception of what qualifies as “old” has itself become old-fashioned.

Measuring age by years since birth is just as foolish as using the dollar as a timeless unit of value. For instance, no serious economist would compare per capita spending in the United States in 1960 (when it averaged $1,835) with 2006 (when the average American spent about $31,200) and conclude that spending has increased seventeenfold. A 1960 dollar and a 2006 dollar are simply different units of value. Adjusting for inflation, we discover that average per capita spending approximately tripled between 1960 and 2006, when both figures are measured in constant purchasing power. In other words, the results are not nearly as drastic when the proper figures are compared.
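The arithmetic behind that comparison can be sketched in a few lines of Python. The two spending figures come from the paragraph above; reading “approximately tripled” as a real ratio of exactly 3 is an assumption made here for illustration, and the implied price ratio is derived from it rather than taken from any official price index.

```python
# Nominal per capita spending figures quoted in the text (dollars).
spending_1960 = 1835.0
spending_2006 = 31200.0

# The naive comparison: how many times larger is the 2006 figure?
nominal_ratio = spending_2006 / spending_1960

# Assumption: "approximately tripled" is treated as a real ratio of exactly 3.
assumed_real_ratio = 3.0

# Price-level change implied by the two ratios (not an official index value).
implied_price_ratio = nominal_ratio / assumed_real_ratio


def to_2006_dollars(amount_1960, price_ratio=implied_price_ratio):
    """Restate a 1960 dollar amount in 2006 purchasing power."""
    return amount_1960 * price_ratio


print(f"Nominal increase: {nominal_ratio:.1f}x")
print(f"Implied price increase: {implied_price_ratio:.2f}x")
print(f"1960 spending in 2006 dollars: ${to_2006_dollars(spending_1960):,.0f}")
```

Under that assumption, most of the seventeenfold jump is a change in the unit of measurement rather than a change in what people actually consumed.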

Just as with the dollar, it is time to introduce inflation-adjusted ages. The best replacement gauge is mortality risk: the chance a person has of dying within the next year. The higher the mortality risk, the “older” a person is. It’s a measurement that reflects much more accurately a person’s health, likely productivity, and remaining life expectancy.

When the U.S. Social Security system was designed seven decades ago, the 65-year mark was deemed the moment when Americans moved “beyond the productive period” and into dependency. That age was chosen based on mortality risk: a 65-year-old man in 1940 could expect to live an additional eleven years, a 65-year-old woman an additional fifteen years. But medical advances have shifted mortality risks enormously. When an American man hits 65 today, his mortality risk is just 2 percent; he can expect to live nearly seventeen more years. He has the same risk of dying that year as a 56-year-old man had in 1940 or a 59-year-old man in 1970. In other words, a 65-year-old today and a 59-year-old in 1970 have the same “real” age. The effect with women is similar. A 70-year-old American woman today has about the same mortality risk as a 65-year-old woman in 1950.
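One way to picture this “real” age is as a lookup: take a person’s mortality risk today and find the age that carried the same risk in an earlier year. The Python sketch below does that by linear interpolation. The function name `equivalent_age` and the table `HYPOTHETICAL_1940_TABLE` are illustrative inventions; the table values are placeholders chosen only so the example lands on the 56-year-old figure quoted above, and they are not actual 1940 mortality data.

```python
from bisect import bisect_left


def equivalent_age(risk, table):
    """Return the age at which `table` shows the given one-year mortality risk.

    `table` is a list of (age, risk) pairs sorted by age, with risk strictly
    increasing. Values between tabulated ages are linearly interpolated.
    """
    ages = [age for age, _ in table]
    risks = [r for _, r in table]
    if not risks[0] <= risk <= risks[-1]:
        raise ValueError("risk is outside the range covered by the table")
    i = bisect_left(risks, risk)
    if risks[i] == risk:
        return float(ages[i])
    # Interpolate between the two tabulated ages that bracket the risk.
    a0, a1 = ages[i - 1], ages[i]
    r0, r1 = risks[i - 1], risks[i]
    return a0 + (a1 - a0) * (risk - r0) / (r1 - r0)


# PLACEHOLDER values, not real data: (age, one-year mortality risk for men).
HYPOTHETICAL_1940_TABLE = [
    (50, 0.014),
    (55, 0.019),
    (60, 0.024),
    (65, 0.035),
]

# The text gives a 2 percent risk for a 65-year-old man today.
risk_today_at_65 = 0.02
real_age = equivalent_age(risk_today_at_65, HYPOTHETICAL_1940_TABLE)
print(f"Equivalent age in the placeholder 1940 table: {real_age:.1f}")
```

With real life tables in place of the placeholder, the same lookup would give the mortality-adjusted age for any year and either sex.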

The implications are significant: the magnitude of the elderly wave is, in reality, far smaller than demographic forecasters have predicted. Forecasts today tell us that the fraction of the population over the age of 65 will grow enormously. But consider what would happen if we replaced the 65-year marker with a mortality-risk threshold to determine who is considered “elderly.” In 2000, about 35 million people, or 12.4 percent of the U.S. population, were over the age of 65. The U.S. Census Bureau predicts that by 2050 the U.S. elderly population will have grown to about 87 million. But if we look instead at the fraction of the population with a mortality risk higher than 1.5 percent, the growth is not nearly as dramatic. By 2050, only 62.5 million Americans, or about 15 percent of the population, will have a mortality risk greater than 1.5 percent. That’s hardly a demographic tidal wave. The global outcomes are similarly striking: a mortality-based measurement lowers the projected elderly population in 2050 in Japan, Spain, and Italy by an average of 30 percent.
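The U.S. figures above can be put side by side with a little arithmetic, sketched here in Python. Every input is a number quoted in the paragraph; the total populations are backed out from the rounded shares, so they are rough implied values rather than Census figures, and applying the implied 2050 total to the 87 million projection is an assumption made for comparison.

```python
# Figures quoted in the text (people in millions, shares as fractions).
over_65_in_2000 = 35.0
over_65_share_2000 = 0.124
over_65_in_2050 = 87.0          # age-based Census projection
high_risk_in_2050 = 62.5        # mortality risk above 1.5 percent
high_risk_share_2050 = 0.15     # "about 15 percent", a rounded share

# Total populations implied by the quoted counts and shares (approximate).
implied_population_2000 = over_65_in_2000 / over_65_share_2000
implied_population_2050 = high_risk_in_2050 / high_risk_share_2050

# Assumption: the same implied 2050 total applies to the age-based projection.
over_65_share_2050 = over_65_in_2050 / implied_population_2050

# How much smaller the "elderly" group is under the mortality-based measure.
reduction = 1 - high_risk_in_2050 / over_65_in_2050

print(f"Implied 2000 population: {implied_population_2000:.0f} million")
print(f"Implied 2050 population: {implied_population_2050:.0f} million")
print(f"Age-based elderly share in 2050: {over_65_share_2050:.1%}")
print(f"Mortality-based elderly share in 2050: {high_risk_share_2050:.1%}")
print(f"Reduction in projected elderly count: {reduction:.1%}")
```

On these inputs the mortality-based count is a little over a quarter smaller than the age-based one, in the same range as the 30 percent average reduction cited for Japan, Spain, and Italy.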

Just consider the consequences of adjusting the age at which entitlement benefits kick in or retirement becomes mandatory to match these new inflation-adjusted measurements. It doesn’t mean shortening retirements, just stabilizing them. In twentieth-century America, the average length of retirement grew from two years to more than nineteen years. As life expectancies continue to rise, retirements will continue to get longer, and the pension bill far larger. If benefits and retirements are governed by mortality risk instead of age, the costs will be far more manageable. We have witnessed dramatic improvements in life expectancies over the past century. It’s time we dramatically improved the way we measure age as well.