The Hoover Institution Center for Revitalizing American Institutions webinar series features speakers who are developing innovative ideas, conducting groundbreaking research, and taking important actions to improve trust and efficacy in American institutions. Speaker expertise and topics span governmental institutions, civic organizations and practice, and the role of public opinion and culture in shaping our democracy. The webinar series builds awareness about how we can individually and collectively revitalize American institutions to ensure our country’s democracy delivers on its promise.

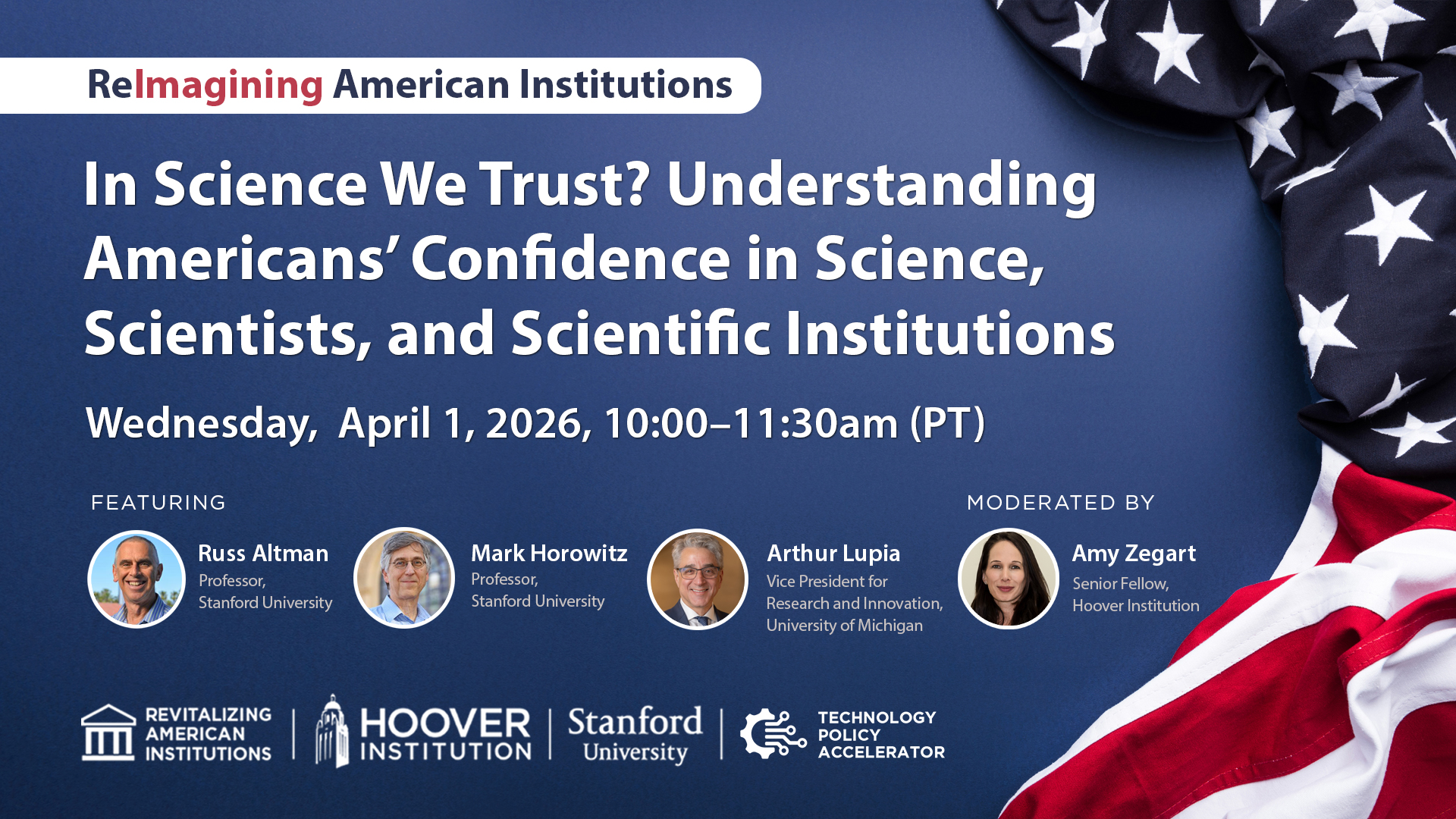

On April 1, 2026, from 10:00-11:30 a.m. PT, the Hoover Institution's Center for Revitalizing American Institutions, in partnership with the Hoover Technology Policy Accelerator, hosted an engaging conversation, "In Science We Trust? Understanding Americans’ Confidence in Science, Scientists, and Scientific Institutions," with Russ Altman, Mark Horowitz, Arthur Lupia, and Amy Zegart.

This webinar examines how Americans think about and trust science in an era of rapid technological change, political polarization, and misinformation. Through data-driven analysis and discussion, panelists explore trends in public confidence toward scientists, the institutions that produce scientific knowledge, and the social and cultural factors that shape these attitudes. Attendees will gain a deeper understanding of what strengthens or undermines trust in science—and what that means for policymaking, education, and the health of American democracy.

- Welcome, and thank you for joining us for today's webinar hosted by the Hoover Institution Center for Revitalizing American Institutions in partnership with the Hoover Technology Policy Accelerator. My name is Erin Tillman. I serve as an associate director at the Hoover Institution, and I'll be the webinar host for today's session. Before we begin, let's review a few housekeeping items. Following brief opening remarks, today's session will consist of a 40-minute discussion followed by a 30-minute question-and-answer period. To submit a question, please use the Q&A feature located at the bottom of your Zoom screen. While we may not have time to address all questions, we'll do our best to respond to as many as possible. A recording of the webinar will be available on the RAI event webpage of the Hoover website within the next three to four business days. The Center for Revitalizing American Institutions, also known as RAI, was established to study the reasons behind the crisis in trust facing American institutions, analyze how they're operating in practice, and consider policy recommendations to rebuild trust and increase their effectiveness. RAI operates as the Hoover Institution's first-ever center and is a testament to one of our founding principles: ideas advancing freedom. Today's webinar is co-hosted by the Hoover Technology Policy Accelerator, which delivers research and insights to help leaders understand emerging technologies and their geopolitical impacts so they can seize opportunities, manage risks, and advance American interests. The discussion examines how Americans view and trust science amid rapid technological change, political polarization, and misinformation. Our panelists will present data-driven insights on public confidence in scientists and scientific institutions, as well as the social and cultural factors influencing these perceptions. It gives me great pleasure to introduce today's moderator, Amy Zegart.
Amy is the Morris Arnold and Nona Jean Cox Senior Fellow at the Hoover Institution, where she leads the Technology Policy Accelerator and the National Security Affairs Fellows Program. She specializes in US intelligence, emerging technologies, and national security. She's also an associate director and senior fellow at the Stanford Institute for Human-Centered AI and a senior fellow at the Freeman Spogli Institute. We are honored to have three guest panelists: Russ Altman, Mark Horowitz, and Arthur "Skip" Lupia. Russ is the Kenneth Fong Professor of Bioengineering, Genetics, Medicine, and Biomedical Data Science at Stanford. His research applies AI, data science, and informatics to advance medicine, with a focus on drug action and how genetic variation shapes drug response. He's also the founder and editor of the Annual Review of Biomedical Data Science and host of the podcast The Future of Everything. Mark is the Fortinet Founders Chair of Electrical Engineering and a professor of computer science at Stanford, and a pioneering engineer and entrepreneur. His work has shaped modern digital systems, from RISC microprocessors to high-speed memory interfaces through his co-founding of Rambus. His current research spans electrical engineering and computer science, with applications in the life sciences. And finally, we're joined by Skip Lupia, vice president for research and innovation at the University of Michigan, professor of political science, and research professor at the Institute for Social Research. He previously served as an assistant director of the National Science Foundation and co-chaired the White House subcommittee on open science. His work focuses on increasing the public value of research and strengthening trust in science. More complete biographies of our speakers are available on the webinar webpage. Thank you, Russ, Mark, and Skip, for joining us today, and I'll hand it off to Amy to start today's conversation.

- Thanks so much, Erin. I want to thank Mark, Russ, and Skip for joining us. I'm sure it's going to be a great conversation. What I want to do is begin by asking each of our guests to commit an unnatural act for a faculty member, which is to share insights and thoughts about the subject in only three to five minutes. But I have trust: I have trust in science, and I have trust in my colleagues. I know they're going to do a great job of putting data and insights on the table so we have a good basis to begin. So, Skip, let's start with you. You've been researching this issue and you've been involved in the policy space, so set the table for us about how you're thinking about it. Then I'll turn to Mark and then to Russ.

- Sure. Thank you so much for the opportunity. I'll first talk about universities and then about science generally. There are real questions about trust in universities among the public, and there's a pretty good reason why. About a thousand years ago, the model for the modern university was developed. The idea was that you take these little clusters of subject matter experts and put them in close physical proximity with other groups, and they inspire each other, challenge each other, and bounce off each other. You add students and community members, and they change the world. And it's been a fabulous model, right? It's done so much for our lives. For about 980 of those thousand years, places like universities had a near monopoly on the production and distribution of many kinds of information. It's one of the reasons that people my age sat through terrible classes in college, right? Because where else were you going to go to learn this? But about 20 years ago, the internet became a viable source for the mass production and distribution of information. That has led to a cultural moment where people look at institutions of higher education and ask lots of questions, but typically they come down to two. One is, why should we pay for what you do, when I can get all this information on the internet for free? The other is, why should we trust you, because you guys seem a little different? So let's talk about those perceptions. I recently led a National Academies effort to do an overview of all the studies on trust in science, filtered by data quality, so I'm only going to tell you about the studies that I think we can validate. There are two main findings. One is that we all know there's been a decline in trust in science, but I want to put that in context.
If you look at companies like Gallup, which have great methodology, trust in every social institution that they measure has fallen over the last 20 years, right? And within the cluster of institutions that are regularly measured, science, scientific institutions, and medical science are still relatively high. They're still in the set with the military, largely above everybody else who's measured. But there's something else underneath the data when you dig into it, and it comes down to the question: Who are you guys working for? Are you working for yourselves, or are you working for us? That's a question that society is asking. Here's an example. Penn has done an extensive survey data collection in which they asked detailed questions about what people think about various aspects of science, and here are some findings that we reported in the National Academies paper. Does the public believe that we can train chemists and physicists and people who specialize in English literature? Yes; we don't have to convince anybody of that. They think universities can do this. But then you start asking questions like: Do we share the public's values? Do we cut corners to do things like publish papers and draw attention to ourselves? Are we biased when it comes to making claims about topics like climate or other politically controversial topics? That's where we've lost a fair amount of the public. There's an asymmetry, with more skepticism on the right than the left, but even the left is asking questions. But one of the most surprising things we found when we were looking at the data was that when you ask Americans in 2024 and 2025 what they think scientists should do, there's actually a huge consensus: 90-plus percent of Americans agree, and it's rare to see that level of agreement on anything. They agree on things like: when we find new data, we should update our beliefs, and when we have relationships that could affect what we find, we should disclose them.
And you might ask, how do Americans have opinions on this? Americans have had science classes, basically through high school, so there is a consensus in the country on what people think science is. And so a lot of the trust problems right now come from the fact that people have a sense of what we should be doing, and they have questions about whether we are actually doing that. So again, as in a lot of the larger trust literature at this moment, people look at institutions like ours and say, are you guys working for us or are you working for yourselves? That presents both challenges and opportunities for us, and I look forward to talking about them in the time ahead.

- Thanks so much. We're going to come back to this question: Is this just a low bar? We know that scientists are trusted more than politicians, right? But what's going on with the declining trust in institutions more broadly? Okay, Mark, over to you.

- Thank you, Amy. Since you said I should be using facts: I have many opinions on this subject, but I'll try to start off with something that I actually know and that is factual. That has to do with how the innovation ecosystem works in this country, which I think is somewhat misunderstood. It is true that in many areas industrial funding of research is much larger than government funding, and companies do a lot of work in actually pushing things out. But what's not as well understood is that those company innovations build upon ideas that were created through more fundamental research, usually done in a university, that may lie dormant for a number of years before being picked up. Part of the problem, and it gets back to what Skip was talking about, is that you have a number of people in a university, and if you're going to innovate, you have to create ideas that most people think are stupid. If an idea is going to change the world, that means when you tell it to somebody, they think it's bad; otherwise it's not going to change the world. If everybody already agrees with it, that's just the way things are. So you have a bunch of crazy-ish people who really are true believers in something, and they work on things either because they're just curious about them or because they view the world slightly differently than other people. Now, the truth is that when most people say it's a bad idea, it is a bad idea. But a few of those things actually turn out to be very good ideas. Those ideas then get pushed out into the startup ecosystem of this country. Again, most of those ideas fail, but the ones that actually make it through are things like Google, or VMware, which basically changed the whole way servers are run. I could go through trillions of dollars in industry that has been spawned by people doing various kinds of research.
And so the problem is that people will say things are true because they believe them, and those things don't always pan out. But that doesn't mean they're lying or not acting in your best interest. It's because you need some true believers to push science forward and to do the unconventional thing that's necessary. So I'm under my three minutes, but let me stop there, because I could go on, but the rest is my opinion and we'll get to that later on.

- We certainly will. But I want to take a little bit of your time, Mark, and push you a little on that, because I've heard you talk about this before. For our listeners, talk about the fundamental research connection to Google, because that's what you were alluding to. Let's make that a little more explicit.

- Yeah. I've been very lucky, because I've been at Stanford for about 40 years, and over that period I've seen enormous innovations come out that essentially created Silicon Valley. In Google's case, there was NSF-funded research on digital libraries. The digital library work was just trying to think about cataloging information. Then a couple of very adventuresome students started playing around and thinking, well, what's the biggest collection of information we have? It's the internet. They started thinking about how to search it and how to find things in it, and they came up with a much better idea, which is called PageRank. That then spun out to form Google. Before that, I think there were a couple of crazy kids working for a faculty member who was on sabbatical; that was much earlier in the internet age. They started cataloging the internet, and that ended up being Yahoo. Then there was some work done at Stanford on interesting processor designs, and that led to MIPS Computer Systems and Silicon Graphics, which ended up pushing the whole computing industry. All of these things happened because somebody had an idea that they could do something a little better, or thought there was a different way of doing it that went against popular belief. My little foray into this was in high-performance memory. We thought that memory bandwidth was going to be very important and started working on technology for that. Again, we pushed against conventional wisdom, because we were making the DRAMs more complicated. But as you might have heard, there's a DRAM memory shortage today, and the hot ticket is something called HBM DRAM, which is high-bandwidth memory DRAM. That all came out of this push 30 to 35 years ago, thinking that memory bandwidth was going to be a big problem.

- So, related to trust, this gets to the question of how we build trust in these institutions and researchers doing work where the tangible connection, the return on investment to the American people, is in the far-off future. That's

- A challenge, right? And the other thing is that if you actually have a vibrant ecosystem, many of the things you try will fail,

- Will fail.

- If you don't fail, you're being very incremental. That's part of science. And I think that's something that's hard for the public to understand, because they think we're wasting their money. I think that's a fundamental challenge.

- Yeah. Russ, over to you.

- Yeah. Skip and Mark have really laid the groundwork, so I'm just going to throw in a few thoughts. The first one is, as you said, there is a general reduction in trust in institutions, and part of that is just pulling down education along with it. I think that comes from many factors, most of which I'm not an expert in, and I definitely don't have data on them, but I'm aware of it. It's part of the conversation that academics are having all the time about how we can counter that trend. So I'm going to put that aside. I think there's an issue with communication. The explosion of media over the last few years, and the lack of media training that scientists and engineers get, means that it's extremely exciting to have a young reporter call you up about your latest paper and ask you about it. It's extremely hard for you not to be incredibly excited about your latest piece of work, and it's incredibly hard for that reporter not to ask, okay, what could this mean in terms of a revolutionary future? That unfortunate positive feedback loop during the interaction can lead to reports to the public that are exciting for a few moments, but when you look back on it as a scientist, you may really regret the level of promise and hope generated by what might have been a very preliminary finding. So I think we have a media problem, there are many players in that problem, and it conspires to create false expectations along the lines that Mark and Skip have already mentioned. The next thing I want to mention is that science is complicated and doesn't always map to common sense. As science and technology have gotten more and more complicated, it has become more and more difficult for the public to understand what is being said and how contingent it is. Is it definitely true? Maybe true? And that feeds back into the first issue.
And so education, and science education in particular, has to be a priority for our society, because then you can ask the right questions. I think Skip described it beautifully: you don't have to be an expert, but if you understand the process and the contingency of all knowledge, that it all could be wrong tomorrow if the right new information comes out, that helps make for a good public dialogue. The third thing I wanted to raise is the question of the scientific bargain. What is the deal? Vannevar Bush and others created this post-World War II structure for a bargain. The bargain was that the government and the public would pay lots of academics to do lots of research, and in exchange they would do research in support of public goals and public missions. It was supposed to be a good deal for everybody. You can argue that it has been a good deal, but it needs to be tended to, nurtured, and curated. I don't think universities have done a good job of that; I think we have started to take it for granted. So we need to reexamine that compact and make sure that it's updated and that it's still valuable. Because of all this, I started the podcast you heard about in my introduction. The idea was to have a public forum where scientists can talk like real people about their passion for their work, why they do what they do, what their hopes are for the future, and what the challenges are in getting the work done.

- Okay, I'm the national security person of the bunch, and normally I look at the downside risk of science, engineering, and technology. And Russ, you know, I often get teased at the AI institute: "Amy, you think every technology is a weapon." And I say that's because every technology is a weapon. But I'm going to flip the script. I want to present some data about the optimistic case for trust in science and ask: why are you still so worried? Skip mentioned a couple of points, and I want to add to that with data from Pew and Gallup. In October, 57% of Americans, including a majority of Republicans and a majority of Democrats, saw the US being a leader in science as important. A majority of Americans think it's important that the US be a leader in science, and that number has gone up over the past three years. Large majorities in both parties say that government investment in scientific research aimed at advancing knowledge is worthwhile, and those numbers are going up too. So the trend is going up, not down. Large majorities of Americans say they have at least a fair amount of confidence in scientists to act in the public interest, which goes back to the bargain Russ questioned. Skip made the point that trust in scientists as a group is actually higher than trust in other institutions, second only to trust in the military, even though all of those numbers are going down. And last but not least, there was a rare bipartisan moment in Congress to reject deep spending cuts to both the National Science Foundation and the National Institutes of Health. Veto-proof majorities in both houses of Congress said we need to keep funding these institutions at least at their current levels. So that's a rare bit of bipartisan good news coming out of Washington.
So with all of this good news, trust in science actually looks pretty good. Number one, why are we so worried? And number two, what are the indicators you're really focused on to gauge how concerned you should be? Skip, why don't we start with you?

- Yeah. The read on the politics is spot on: there's strong bipartisan support for research and science. One thing that is changing, and that people like us at universities should think about, is what we are being asked to do. In the Vannevar Bush era, Congress had a willingness to write a blank check, if you will: we know the science is important, you guys figure it out. Over time, the directives from government have become much more detailed. Again, I think there's a reason for that, right? There's a sense that maybe we can get information from other places. So one thing to be aware of is that over the last 20 years, and particularly the last 10 (I won't show a graph here), the type of grant that many people my age got, discipline-specific, individual-investigator grants, has grown very slowly, and that growth has been slowing. Where the growth has really been is in things like the ARPAs. You have DARPA, the Defense Advanced Research Projects Agency, and now you have ARPA-E, ARPA-H, ARPA-I, and the TIP Directorate. They're more applied, program-based, and cluster-based. Part of that is a bipartisan philosophy that we want innovation faster; we don't really want to interrogate how basic research works. The last thing I'll say, just from my time in government, is that when I meet members of Congress, most of whom are very thoughtful, they have to go in front of constituents and answer the following question. When I was at NSF, the average engineering grant was $130,000, which at the time was two times median household income. So they were in the position of going out in front of people and saying, well, here's a study from engineering. Why is this so important that you'll take the entire year's earnings of two median American households to fund it?
So some of the pressure on members of Congress is answering that question in an era where, for better or worse, people look at the internet and say, but I can just get a lot of my information from Google. That's part of the pressure. Going forward, making the case for a workflow that includes basic science and applied science as parts of an ecosystem that drives progress, saves lives, and improves lives, which is arguably the best way we've ever done this at scale in the history of humanity, is a powerful argument to make. We just need to figure out how to make it in a new circumstance.

- Russ and Mark, do you want to jump in here?

- Yeah, I just want to add a couple of points. I completely agree with Skip: the ratio of fundamental research to more directed research has been changing, and there's an issue in the funding of that more fundamental research. As I said, that research is the seed corn, if you want to use that analogy, for all the more applied research. If we don't have people pushing at the frontier, it's a problem. I personally have an issue with the term "curiosity-based research," which is how fundamental research is often described. It sounds like, oh, we're just thinking, oh, a butterfly, that would be a nice thing to study, or something like that.

- It sounds like we're sipping lattes and not doing something serious,

- Right. And the point is that curiosity-based research is basically somebody with deep expertise in some area having an idea about an area that has not yet been explored, and they're curious about what happens there. Or it seems like there could be some interesting relationship, and they're interested in exploring the frontier of knowledge. So I would prefer "exploring the frontier of knowledge" to "curiosity-based research," because one seems way more serious than the other, but to me they mean the same thing. Okay, so that's one point I want to make. The second point is that part of the problem we have today is that society is very polarized in its information flows. If you're in a very polarized information space and you think that innovation requires failures, you're in a very difficult situation politically, because whatever you do that doesn't work, and you expect most of it not to work, will be taken by the other side as stupidity on the part of the side that actually did it. This happens in all different domains; climate is probably the most polarized right now. It's one of those situations where science fundamentally has to have disagreements. It's almost never the case that everybody agrees on a topic; you always have people thinking, no, that's not true, right? That muddies things, but it's normal. If 90-some-odd percent say X and some smaller percentage say Y, we basically say, okay, this is the plan of record. But that's very hard in a very polarized society, because each side will claim the other side's scientists are idiots and that we can't trust science. So I'm still worried.

- Yeah, I am too, and I'd like to offer some bad news and some good news. The bad news is COVID. COVID was extreme in both directions. As a technologist, a doctor, and a researcher, watching the creation of diagnostics and vaccines at the scale and speed that it happened was breathtaking and made me proud to be a human. At the same time, there was a big problem in public understanding of the loss of freedom: the taking away of people's freedom, school decisions, other decisions. That all got confounded, so that for one of the first times ever, biomedical research, which had been almost pristine and above reproach, was drawn into the fray. Many of us in my field were asking, what's going on? People always used to trust us. But because of our involvement in a big mess, and I think it's fair to call the COVID response a big mess, there was a definite hit to biomedical research and to perceptions of its value. There was a questioning of its role and, again, the question that came up earlier: who are you working for? So that's the bad news: I think there was a big hit there. And I think it's a lesson to all of us that when big national crises happen, scientists need to stay in their lane. They need to make sure they do the work that's needed and generate the technologies, but they also need to understand that there are other people who will then take those results and figure out how to turn them into policy and action. The good news is this. After the end of the Cold War, let's say 1990, we had a period of 20 years, and this is not my expertise, but I was alive and watching it, when there wasn't a focused adversary. That led to a certain amount of largesse and maybe a little bit of laxity and lack of discipline. In some sense, the good news, and I say this in quotes, is that we now have a clear adversary.
There's a competitor on Earth, China, that is working very hard in all of my areas and probably all of Mark's and Skip's areas. That focuses the mind in a way that makes me optimistic. Of course, lots of things have to happen, but part of what you're seeing in the actions of Congress, and the other good news, is that we now know exactly why we're doing this, in ways that from 1990 to 2010 or so we might not have been very clear on. That was my generation getting a little fat, and I use that in the sense of not staying in good physical shape. So that's the second thought that comes to mind.

- I want to drill down a little on the race we have against China and what we're talking about when it comes to funding for research. Not all funding is created equal. What we've seen is that federal investment, that patient, long-term investment in fundamental research, has actually declined dramatically since the 1960s; it's a third lower than it was then. Meanwhile, China is copying that model and investing in fundamental research six times faster than the United States. So China is doubling down on the Vannevar Bush model while the United States is moving away from it. One of the arguments we often hear is, well, there's venture capital, there's this thing called the free market, and so there's lots of private sector investment in R&D. But it's not the same kind of R&D. So for our listeners, talk about what's different: What can only the federal government do? Where is private investment going to have the biggest impact? And how do we get to a new model? Russ, you mentioned a new compact, so that we as a nation are actually investing in research and development and, by the way, building trust in those investments.

- Well, I'm really technology focused, but I have done some work in industry, and I've certainly dealt with venture capitalists. The thing I notice when I'm in industry is that they care about money. Venture capitalists don't invest in technology because they want to grow technology or create some great future. Venture capitalists are capitalists; they invest in technology because they think it's going to make them money. Okay? In a similar way, when industry is doing research and development, it's doing it to make money. The problem is that with fundamental research, the research that changes the world, generally speaking the people who do the research, or the institution that funded it, do not capture the value, right? Google is worth a bazillion dollars, but Stanford didn't get that money; it was a greater-good kind of thing. So the concept that's important to realize is that society needs this fundamental research done, but the institutions that do that research generally don't capture the value, because in a change-the-world kind of situation, the new idea isn't the business the company is in. So generally the people who've done this leave the company and start a new one; students graduate from Stanford and start companies. There's tremendous value creation, but the institution that paid for the research doesn't generally capture the value. If you look at that, venture capital isn't going to fund it, right? Industry isn't going to fund it. The only institution that will fund it is basically a national institution, because the nation does capture the benefit, right?
And in fact, if we have a good ecosystem to do that, and I think the United States had and still has one of the best, it attracts people with ideas from around the world to come here, and the nation benefits from that as well. So I think we need to keep that in mind. When people say there's way more money in industry funding, that's correct. But that money is directed at making more money, not at changing the world.

- And if I can just add to that, I think a corollary is that basic science tends to be cheaper than scaling. You want to scale an industry once the technology is proven or the proof of concept is there, and you don't want to do that in academia. Academia should not be doing that. If I develop a drug, I should develop the drug, but then I need to get it out of Stanford and into a company that knows how to make kilogram quantities of it. So there is a clear boundary between what is appropriately done in a university and where the scale is simply beyond what a university can do. The other thing I wanted to address, which is related, is that, Amy, you asked who we are working for. This becomes an issue: who are you scientists working for? Are you working for us, the public, or for yourselves? And the answer very clearly is both. As an academic, let's be honest, I want to do good science, and I want to write papers that make my colleagues say, boy, I wish I had written that paper, I'm jealous, and I want to change the way they think about our field. So that's all about me, me, me. But when I make a discovery that actually has legs, I want to make sure that it gets licensed to a company that already exists, or that I start a company, so that it can scale. So it's not really a fair question to ask who you're working for, because of course we have all the same personal ambitions everybody has, but part of our job description is also the requirement that we take the stuff that really works and push it out into the world.

- Just one point on this: when we think about the role of government, it's important to take a minute to think about how large the US government is. If you just think about the NIH budget in a single year, it's between 50 and 55 billion dollars; you'd need a $2 trillion fund to generate that every year. So it's very large. The other thing, as Russ and Mark mentioned, is the risk tolerance of a portfolio like that: you can actually take some risks over time, build a portfolio, and so forth. One of the conversations I've been in, both in DC and here, is this: let's say you were trying to build a national research portfolio. There's a way to think about the role of government. It funds the high-risk, early-stage intellectual work with long time horizons. If you are thinking in terms of portfolio management, you'd put that in the federal government. You would then have the mainline philanthropies and some of industry fund the translational work. And then for venture capital, the new venture philanthropy, and things like that, there's another set of low-probability risks that you can't justify to the taxpayer but that could actually pay off, and they can take those on. I think for the nation as a whole, if those three entities were more coordinated and had more visibility into one another, the benefits to the ecosystem could be great. And I feel like efforts such as the Genesis Mission at the Department of Energy are an attempt to do this: to rethink the whole mega-science workflow, all its component parts, and how they could work together more effectively.

- Just to add a point of color to make the scale difference you're talking about very clear, Skip, because I think it's so important: think about Eric Schmidt's moonshot philanthropy to fund science and engineering. He's talking about giving away a hundred million dollars a year. That's the cost of one F-35. So government has scale in a way that the private sector does not, and we often forget that. I want to turn to you, Skip. You've talked, written, and researched a lot on the question of where trust comes from. So let's dig into that a little bit more. Is there something different about what drives trust in science versus trust in other fields?

- Yeah, so there is a great literature on trust, both interpersonal trust and trust in entities like organizations or society. Trust is always a present-moment perception of a future relationship. Trust really matters when you're engaging with someone or something and there's risk in the future. And the thing about trust is that you can ask someone to trust you, but that's irrelevant; people have to decide for themselves. There are different levels of trust. At the lowest level, it's transactional: at this moment, for this purpose, I have enough of an understanding of you that I trust you to do this thing. But that's of limited usefulness when you're trying to take risks in science. There needs to be a different level, where I basically need to understand your source code. I have to have a fundamental understanding that, across a wide range of situations, there's something like a mantra, or a small list of axioms, if you will, that drives the decisions you make. And if I feel like I understand that, and I look at you as a person, or at an organization, and see behavior consistent with it, that's the manifestation of trust. So when new circumstances emerge, if I feel like I understand your algorithm, that's really great. One of the more pernicious things about social media now is how much it eats away at something like that. As Mark was saying, I could make what you might call a mistake, or I could do a study that doesn't work, and you could say, well, it didn't work, so Skip is really stupid. And if part of why people trusted me is that they thought I was applying intelligence to problems, that really eats away at it.
Whereas if we're in a community where people say, no, Skip was trying to do a thing, there was an underlying theory, and we all knew there was a chance it wouldn't work; the reason to do this work is that if we learn it doesn't work, a thousand other people never have to make that mistake again, and if it does work, we're going to save a thousand lives with the same amount of effort that would have saved one today. Those are the types of narratives that facilitate trust. But I will say that social media makes that kind of trust much harder to maintain, because it's so easy to take a thing that I do and turn it into a stereotype that undermines the ability to have confidence.

- So if we broaden the aperture a little bit: we've talked a lot about trust. Let's disaggregate it. Is it trust in science, trust in universities, or is there something else going on? You all have talked about social media and the media environment we're in. I keep thinking about research that our colleagues Doug Rivers and David Brady did. They do longitudinal election polling, and I remember Brady saying they saw something in the last cycle for the first time. Typically you think people vote for president based on how they feel about the economy; the economy is a big driver of who you select for president. But this last cycle, they saw the opposite for the first time: how you felt about your political party actually influenced your view of the economy. Whether the economy was doing better or worse was a function of your politics, not the other way around. They hadn't seen that before. So I'm curious to get your perspectives. Number one, do you think polarized politics are influencing how people view and trust science? And number two, do you think there's something different going on with trust in science versus trust in universities, versus this expression of identity and culture and where you belong? I know I've thrown a lot at you all; you can take your pick.

- So I want to be careful to talk about stuff that I actually know about, because this is good stuff that I'm interested in. But I do think there is something going on here. In the old days, let's say the old days, let's pretend they were the good old days,

- That would be yesterday.

- If you had a view about something that you wanted to be true, something that would help you achieve your goals in the world, whether you wanted to make money or be elected to office, and there were scientific facts or theories that were problematic for it, that was a big problem. It was hard to get around, because you would have all of these people saying the science is going against what you're saying: we can't be a hundred percent positive, but what you're saying is unlikely to be true based on what we know. It would be contingent, but it would be hard. Now that's not a problem. If you need something to be true and it's not, it's easy. We know how to do this. You get on your social media, you figure out what your bots are going to say, you flood Facebook, you flood the Twitter-type platforms, and you create doubt so that you can push your agenda forward. So making science almost irrelevant to many policy debates is easier than it's ever been, and that causes me, as a scientist, some concern. I think it changes the nature of what people are willing to say in public, because they know there's a toolkit for making sure they won't be held to the scientific standard: you can always inject doubt in the ways that Skip just described.

- So let me follow up with you on that, Russ, and ask you to comment on an area where you are an expert, and that is AI. As we think about what happens to trust in science when the scientist is no longer human: when you're using your chatbot, and by the way, you can give your chatbot the personality you want, or the accent you want, or the language you want, so you feel like you have more affinity with it. What happens when your query is, I want to believe this, and your sycophantic AI friend

- Right?

- Is not even human, and that's the scientist. What do you think is likely to happen?

- So I'm going to disagree with you, Amy, and I think you probably knew I was going to say this: you are not allowed to call AI a scientist. Right now, having a human being be the scientist is integral to the working definition of a scientist. Yes, there are amazing power tools that I and all my students are using every day. I'm guessing Mark and all of his students are using them every day, and I'm guessing Skip is too. But there's a professionalism principle here: until further notice, the scientists are humans who take full responsibility for what they did with the AI. Therefore you are never allowed to say the AI did bad science. If you used AI and got a bad result, you did bad science. That's a basic principle of professionalism, and it appears that people are on a slippery slope there. I think we have to stop that. I think it applies to every single profession that's using AI: unless you want absolute chaos, there has to be human responsibility for the outputs of AI. I know there are many cases where that's not happening, and that's eroding public trust, because the public is assuming that scientists are using AI in responsible ways, compatible with the compact we have with the government and with our funders. And the moment you say that AI is a scientist that can do its own thing without supervision, the goose is cooked. So I'm sorry, you gave me the opportunity to give that big speech and I probably used up all my time, but that's very important.

- I asked and you answered. Mark, you look like you want to jump into this conversation too.

- Well, I just wanted to say that you asked the question, is polarization a problem? And I think polarization is an enormous problem, for exactly the reasons that Russ and you pointed out. There are generally contrary views in science. If you're polarized, it is very easy to pull the one or two studies that said something and were later discredited, and use them as, basically, the science. And it's very hard, if you're not an expert in an area, to sort through it or to know what the preponderance of the evidence is, to use a legal term. So people get confused, and because they hear scientists argue both sides, they figure, well, maybe nobody knows, and they can decide whatever they want. Or they choose the view that agrees with what they want to believe. Whereas if you stepped back and took a wider view, it would be very clear what the answer is. So I think this is an enormous problem, and I think politicians and people who have a point of view play on it. Now, this is made even worse by the fact that many of these issues are now business decisions. People are vying for money, making money on these different technologies. So they will spin things to make their product seem better than other products, which has always happened in the market, but now these are highly technical products. So they say something about the science behind those products, and that further confuses the whole thing. And if I'm going to pile on the worst part, the thing that makes this all happen is that we really are in an attention economy. Your currency is how many views you have, and that causes, let's just say, the more extreme views to be promulgated, whereas science is usually a little boring.
I mean, it's not so exciting. And I think all of these things join together to make people ultimately have less faith in science.

- Can I add a point on Mark's point? For almost all of human history, the baseline assumption we would have about any human on any topic was that they wouldn't know the answer to a hard question and had no way of finding out. I think about May 10th, 1950, the day the National Science Foundation was created. The average American household had 12 to 15 books in it of any kind. If you lived in a city, you could go to a library and get two more. If you wanted to remember anything about it, you had to use a notebook, and ballpoint pens weren't mass produced until the 1950s, so typically it was a quill pen on really bad paper. That was the state of how we knew things, and now we're in a different environment. I think there's a fundamental adaptation that universities have to make. There's this belief that we're in the knowledge production business, and that's because at one time there were only five articles in chemistry, and then someone wrote the sixth chemistry article and, aha, we have new knowledge. But now there's knowledge production everywhere, and AI is just going to amplify that. So the world isn't going to reward us for producing information. But there's a thing we can do, because we can convene, because we're human beings: we can legitimate knowledge. If we have the right processes, if we operate with a certain type of transparency, we have a legitimation capacity that's really unrivaled throughout the world. You might be able to create some of it in AI, but even that's probably only in certain fields, where you can validate certain types of stacks with respect to certain types of knowledge bases, if everyone agreed to it and everybody understood the coding. Since that's unlikely, there are so many domains of knowledge where what matters is the convening power of universities, the back and forth we have to interrogate claims. That's the secret sauce for us.
But I think universities have sometimes been slow to adapt, hoping the world will come back to just rewarding us for producing more information, and that ship has sailed. The lane that's available to us is pretty exciting, though, if we look at it the right way.

- So let's spend a little more time on that, because we've talked about problems. Let's talk about directions for solutions, about what universities need to do. I often joke that China invented bureaucracy, but universities perfected it. We are a very slow-changing set of institutions at a time when technology is disrupting so much, and we are training the next generation. That part of it, not just the knowledge production but the training of the next generation, the human capital piece, has to adapt too. So Russ, I want to start with you, because I know you've been appointed, as penance for your sins, to a high-level Stanford committee to think about how to communicate, how to adapt the university, and how to reimagine the compact with the federal government. Share your thoughts on what we inside the academy need to do very differently, both to adapt and, back to our question about trust, to restore trust in universities, in particular at this political moment.

- Yeah, thanks. Skip had the high-level idea exactly right. He said that deep trust depends on people understanding the DNA of how you work. So I think the first thing universities and scientists have to do is think about the details of how we work and ask where there has been an erosion of the ideal set of processes. For example, are we debating ideas, or are we treating existing models as reigning models not to be challenged? You have to inculcate in students that when there is something we're almost positive is true, that's the thing to go after, because if it's not true, that's a big deal. All knowledge in science is contingent. What I mean by that is that everything can be disproven tomorrow by a clever person who comes along. That's both a strength and a weakness. It's a strength because it's true, and it leads to a more robust set of understandings. It's a problem because, if you take it glibly as a non-scientist, you can say, well, that means everything could be wrong. And it is true that everything could be wrong, but we're all working extremely hard, or we should be. So an analysis of the degree to which we are constantly questioning all our foundational principles is something that has to be renewed. The second thing is that we have to make sure the financial model is justifiable and easily understood; it does need to be easily understood. You might not understand all the details of plasma physics, but you need to understand, when the government gives money to an academic, how that money is used, how it is stewarded, and whether there is a level of reporting that makes everybody comfortable.
Anybody in the real world (I'm an academic, and I don't call academia the real world; I'm not that stupid), people who have real jobs in the real world, know that there are ledgers, receivables, and payables, and we have started to use a language in academia that is so foreign to that vocabulary that it creates barriers, not trust. And the third thing, because three is a biblical number, is reproducibility. There is a reasonable expectation that we pay attention to the reproducibility of our work. I think this is sometimes used against us, and fine, but the fact is there are a couple of things going on. There is a laxity in peer review that I've seen over my career. Peer review is the key thing in science. We get an article that one of our colleagues wrote, we're not conflicted with that colleague, we don't really care about the outcome, but we read the paper and write a review for a journal editor about whether it looks like a good contribution. Because life is complicated and, I believe, has more distractions than it used to, and I think there's some data on this, the quality and rigor of peer review have gone down, and that is death for science. It needs to be evaluated, so let's establish whether there's actually a problem. It needs to be constantly renewed and reexamined, because it's the bedrock upon which scientific claims are adjudicated. The other issue with reproducibility that I want to add is that sometimes it's not that the work was fraudulent or the results were made up. That's a very small and terrible proportion. It's that someone did an experiment so specific that what they found is true but not relevant or useful to the world. We know in physics that force equals mass times acceleration, and that is a very robust observation that has withstood tests for decades, and in fact centuries.
When people publish papers in biology nowadays, sometimes it's not that the result can't be reproduced; it's that the level of detail you would need to get exactly the same result means it's a fragile house of cards, and it really is not a robust finding that we should build on in the future. So these are, again, true but not very useful or relevant, and that's a big part of our reproducibility crisis. So those three points come to mind as things we need to work on.

- Mark, Skip, do you want to add to the list of things we need to work on?

- Can I? Mark, do you want to go?

- No, go ahead.

- Yeah, I think what Russ said was brilliant. I want people to think about three different concepts: one is the scientific method, one is a scientist, and one is science. The scientific method, I'm going to throw out there, is unassailable. It is a way to evaluate conjectures about cause and effect, categories, existence, and so forth. And the key thing about it is that, if you follow it, it's intersubjective. What I mean by that is you don't know whether the thing you find is true or false, but if you are transparent and specific about how you did it, any person who followed that method should find the same thing you did. That's the leverage that science has. So that's the scientific method. A scientist is a furry, highly flawed person who spends a small part of some of their waking hours using the scientific method to discover things, but the rest of the time they have an ego and needs and things of that nature. That's important to keep in mind. And science is largely a pop culture phenomenon that involves the scientific method and has scientists in it, but people claim all kinds of things in the name of science. For this endeavor in which we're all engaged to have maximum social impact and save as many lives as possible, we should, any time we can, go back and hew to the scientific method and to its intersubjectivity. Let me give you an example of where it didn't work and how we can improve. Follow the science, take the vaccine. There are two parts to that sentence. On the science: scientists did this incredible thing where they studied the COVID vaccines and found the distribution of results. For most people the vaccine had this incredible effect, and for some people it had a bad effect.
And then there are other social things involved with taking vaccines. That's what the scientific method can show you. But then there's the question of whether you should take it, and that raises all kinds of moral and ethical questions for which the scientific method I described is just not well equipped. When we fused them, it caused a lot of confusion. That was a time when we scientists, with our egos and our good intentions, misrepresented the scientific method and caused a problem. And to Russ's point, things like reproducibility, our commitment to serving people through the method, are one way to demonstrate what we're trying to do. And it's a way to build trust.

- No, I don't have much more to add. The only thing I would add, in terms of the scientific method and viruses and vaccines, is that you could just leave it to the capitalist system. From a return-on-investment perspective, people who take vaccines are less likely to get sick, so you could just change insurance rates or health insurance costs depending on whether you take the vaccine or not. It's your choice. Because of the way science works, the scientific method would determine that expected health costs would be higher, on average, for people who didn't take vaccines than for those who did. I think that's still part of the scientific method. But it still could be your choice. So there are ways, as Skip said, that we could have mediated this better and disentangled it. It gets a little more difficult with global phenomena that have externalities, and then it becomes more complicated.

- So I want to bring something into the conversation that we haven't mentioned so far. It gets to political polarization. When we look at ourselves and our institutions, we see that in fact they do not represent the views of America. There is a dramatic skewing of political perspectives in universities, which we know are heavily skewed to one side of the political spectrum. Some would argue that this influences what you take for granted, what research is considered valuable, what questions are asked, and how free people are to actually challenge each other in a classroom or a seminar, to say, I don't agree with you. Because if you're the only one in the room who holds the views you do, it's a lot harder to disagree. So how should we think about the political skewing of universities and the current environment, where the administration is saying, you know what, it's not okay to have an environment where, in particular, certain groups are not protected in the way that others are? This is the elephant in the room; this is a very charged moment for universities. I'd like to get your thoughts on how this all intersects with trust in science and what we do about it.

- Well, let me just point out, since we're talking about facts, that the split in views is also highly skewed by education level. Now, I don't know if it's cause or effect, but if you look at people who have PhDs, they're skewed in the same way that academics are. And since most academics have PhDs, we can say it's a problem that the skewing exists in the first place. But I'm hiring from a pool of people who, of course, have PhDs.

- Right, PhDs, right?

- They're going to be skewed.

- So the university environment is reflective of the pool from which it's drawing. Got it.

- Exactly. And the second thing is

- The question is, is there an effect there? And if there is, how concerned are you about what that effect might be?

- You know, when I interview candidates for positions in electrical engineering and computer science, the question of their political views never comes up, and I think it would be inappropriate if it did, because it's really not germane to the fields we're interviewing for. So there is this problem, but I'm not sure it's a very serious one in the domains I work in. Maybe other people would disagree with me, but

- I can talk about it a little bit. I'm at a public university, so this lands a little differently here. But I want to start with a field in psychology that studies how people perceive each other, where there's this notion of ingroup and outgroup. Say we're part of an ingroup, say we're liberals, and most of the contact we have is with liberals. You can form this perception: I know people who are liberals, they come from different parts of the world, and they think about liberal ideology differently, so our group, even though it's liberal, is very, very diverse. Now, if you never meet conservatives, here's the next part of it: they're all the same, and they think very simply. This is a common phenomenon. So one of the things that happens is that the left constantly refers to the right as stupid, not because it has actual evidence of that, but because the two sides don't interact very much, so you can form the stereotype. And by the way, the right does similar things regarding the left. That's not a new phenomenon; that's just ingroup-outgroup, and it's been going on for as long as human beings have lived in groups. So now, to the point that when we're hiring at universities in technical fields, there's an ideologically correlated selection on who gets PhDs, and that's fine. If we were only technical institutes that gave only technical advice and walked away from everything else, maybe everything would be great. But we're at universities where we tend to give other advice about what trade-offs you should make, whether we do it as an institution or as individuals.
Now people are saying that universities are providing a lot of advice about what trade-offs we should make and how we should live. And again, being trained in the scientific method, as awesome as it is, may not give you any special benefit when it comes to moral and ethical knowledge, which is how societies navigate a lot of trade-offs. And I think part of our current moment is that sometimes we'll say things like follow the science, take the vaccine, and people respond, wait a minute, there are some other considerations here, and we say we don't get that. So I think that's part of how to adapt. Again, at a public institution we have more lanes of feedback, where we can try to hear from lots of different people. You never give up your integrity, but you try to treat everybody with respect, and then you talk about what you're doing with respect to the portfolio of views. That's sometimes hard to do, but I think you have to try, because the worst-case scenario is when we don't try and we assume that everybody who isn't us is stupid. That's a disaster. And I think the perception that that's what we're doing, which unfortunately is based in reality to some extent, is a big part of the trust problem we have now.

- Let me just take the opportunity to give a shout-out to my Hoover colleague Josh Ober, who's a political science professor (we political scientists know each other). He has spearheaded this wonderful civics initiative here at Stanford where you can volunteer to add your syllabus to a website that says, in effect: I really value debate; to Russ's point, we should be interrogating all these ideas; that's the class I'm setting up and the culture we have. So students can be more savvy shoppers of the courses they take, and they can self-select into a community of students where they get exposure to different ideas and the give-and-take. So there is work being done, but there is also work to be done.

- I was just going to add that there are great lessons from World War II and Korea, when large numbers of people, mostly men in this case but also women, were mixing with others from all over the country. My understanding from the literature is that it created a better understanding of other people's perspectives and actually led to a lot of good things. I do think that colleges and universities should be places where you meet people from all over the spectrum, and it's a failure of the university or college if it doesn't provide that experience, because it's so valuable and so important. I believe there's empirical evidence for this. So to the degree that the campus is too homogenized, a monoculture, it's a disservice to the paying customers. Right.

- Right. And I will just add at the end that even though we're highly skewed, we're not monolithic. Among the faculty, differences of opinion do come up, maybe not as...

- Maybe. In my experience, and maybe, Mark, you've had the same thing, in a faculty meeting, if you have two faculty members, you have five opinions. Right. Okay, let's turn to some listener questions. This is a question for you, Mark. It says: "Thank you for your point of view. I agree with it, so it must be a very good point of view. Most academics engaged in public conversations about the payoff of federally funded research come from the non-STEM side of the enterprise, at Stanford and elsewhere, and they're unable to articulate your view as persuasively." So the questioner asks, why don't the STEM departments, or universities like MIT, take a more active role in leading the public conversation on these issues? Let me add an addendum to that question, because you are taking an active role in the Tech Policy Accelerator and in the Stanford Emerging Technology Review, and you are going to Washington to make these arguments. So why don't more people do that, and why do you do it?

- Probably the reason more people don't do it is that we tend to be pretty busy and pretty enthusiastic about the work we're doing. So if we want to change the world, we think, probably not correctly, that we should just focus on our work and get it done. And talking with people from outside your community is an enormous reach. Russ knows this because he's done it, and I've done it. I've been working in interdisciplinary areas for a while, and I would talk to people on two sides who wanted to work with each other. They would both say that they were standing out there, had made all these great offers, and nothing had come back. And when I talked to both of them, they both had dinosaur arms. They thought they were stretching, but they had no conception that the other group doesn't think about research the same way they do. It's very hard. So this kind of communication takes not only deep technical knowledge but a real willingness to become a student, to watch people and figure out what they're thinking and how they think, because to communicate you really need to bridge the different perspectives. It takes effort, and it takes a lot of time. As for why I'm doing it, I guess I think the benefit is really large, and it frustrates me when people argue about things they really shouldn't be arguing about. It's just a lack of communication. So I've decided to spend some of my time, which is the most precious thing I have, on this. But I think many people in the STEM areas are very busy and very focused on their research, and this is basically time away from their research. Russ, do you have something else you would say there?

- No. I mean, part of this question is whether our non-STEM colleagues are making the best case for their work. I love being at a full university. Yes, I spend most of my time in the School of Engineering and the School of Medicine, but some of my best conversations and interactions are with English professors and art professors, and that enriches not only my life but my students' lives. So we need to get that across, because being at a full university, where every area of human endeavor and knowledge is represented, is a huge benefit and a huge privilege. I think the questioner is right: we don't make the case nearly enough that even we techies love having those people on our campus. And they don't just enrich our lives; they enrich our research.

- Right. I was talking at one of these interdisciplinary gatherings with somebody from, I think, classics. We were just talking about societal issues, and they pointed out that in every story you've ever read, bigger is better: in the story, you grow, you achieve something. So if you're trying to deal with sustainability and you're saying that maybe bigger isn't better, it's a very hard story to tell, because it's not a story you've ever read in any of the texts. And I just thought, wow, that's a very interesting point, one that communicates a lot of things I would never have thought about. So I completely agree with Russ.

- So I will say, Russ, I'm a little offended that among all the disciplines you mentioned, you did not put political science at the top of your list, but we're going to let that go. Skip, I'd love to hear your perspective on this too. From my perspective as a political scientist doing work in national security, our impact is directly influenced and augmented by a deeper understanding, which only our technical colleagues can provide, of these emerging developments and how they could shape the world: both the promise of these capabilities and their pitfalls or perils. Russ and Mark have heard me say this a lot: if it's only political scientists like me opining about tech policy, we're all in trouble. We need people with a deep technical understanding of how these technologies work to inform better policy. So being part of a full university doesn't just benefit the techies; it benefits the non-techies and the policy engagement we do. Skip, is there anything you wanted to add to that?

- Well, I want to distinguish between two things. There's the act of telling the story about the value of research, and that's great, and people put those stories together. I want to differentiate that from what someone hears when you make that presentation, because ultimately the purpose of any presentation is to form a new memory, or alter existing memories, in the people you're trying to reach. And those can be two really different things. So I'm going to cut to an example. I have met hundreds of members of Congress, hundreds of times, and I have a strategy going in. The strategy is that I'm not going to ask them for anything. Instead, I'm going to understand everything they've talked about in public for the last six months. You might think that's hard to do, but if you're a member of the United States Congress, you tend to talk about the same things a lot, so you can figure that out. That's the first thing I know. The other thing I know is that on the day they're meeting me, they're probably meeting 10, 15, 25, or 30 other people, and they're going to be very nice to me. What they remember might have to do with the 30 minutes we spent together. But what's probably going to happen is that at 5:30 or 6:00 or 6:30, when they meet with their staff, the member politely asks some version of "What the hell happened today?" and they go through the day and try to figure it out. You want to be the person who put something in the room, so that they call you back. But typically that's not going to be you talking about how great your research is. So, because I know what they care about, I can say, with complete sincerity and integrity, "Thank you, Member of Congress, for doing A, B, and C; it's a great service to the nation."
And then they might talk about B and say, yes, B is really important. And in my back pocket I have a note: did you know that Stanford is actually doing work on B, and in the last couple of months it has done this particular thing? If I say it the right way, the staff leans in and the member leans in: can you give me more information about that? If you can pull that off two or three times in a meeting, because you are focused on answering the questions they're asking in public, so that instead of trying to sell them something you're helping them do what they say they want to do, they call you back. And when they call you back, it's a different conversation. If you ever want to have a conversation with anybody in policy where you ask them for something, it's that call, not the first one. But if you come in selling your latest paper, people will be polite to you and say great things to you, and the next day they won't remember any of it. So I just think that's a skill worth thinking through.

- I'll just share this, and then I want to make sure we get to some other questions. When a bunch of us were in Washington with the Stanford Emerging Technology Review last month, it was very telling that the senators, members of Congress, and staff we met with said over and over again, and I think this is true, that academics tend to think about the data. We're really nerdy about statistical significance, et cetera. But what convinces most people is the story. We need to talk in language that communicates our value proposition to our stakeholders in Congress and to the American people in a way that lands with them. Everybody remembers the story. Nobody wants to hear about statistical significance or quantitative analysis. As lovely and as important as that is, it's not persuasive. Okay, next question: "I would be interested in the panelists' views on whether part of the problem is the phrase 'the science says,' when in fact, as Professor Horowitz and others have touched on, science is about openly contesting ideas in an evidence-based way." So part of the problem might be a misunderstanding by most people, especially in politics and policy, of what science is and what it isn't. What do y'all think?

- My first thought is: yes. Period. New paragraph. That is absolutely true, and I think my COVID example showed what happens when people start getting confused. Science doesn't say things. Scientists do things: scientists have theories, they have data, and they have ideas about what might be true, held with different levels of confidence. But that never translates directly into policy. There's a whole layer of social debate that has to happen before a scientific theory or fact is used as the basis of policy.

- But Russ, we often hear in the political debate, "follow the science."

- Yeah, I like that,

- "The science says that..."

- I don't like "follow the data." "Follow the data" is even worse than "follow the science." We can get into this, but data is raw, it's unprocessed, it's filled with noise, it's filled with problems. The job of the scientist is to look at that data, apply theories, and come up with some contingent conclusions. So this is kind of what I was saying before: when we're dealing with the media, we need to change the vocabulary we use to talk about our findings, and that's going to involve humility, another thing that is not the most common resource on a campus. But I think we need humility, and we need openness to debate and challenge. And it means that we're going to sound less productive. Sorry, but that's probably how we have to sound.